Notebook to demonstrate TAO-Remote Client AutoML workflow for Image Classification

Transfer learning is the process of transferring learned features from one application to another. It is a commonly used training technique in which a model trained on one task is retrained for use on a different task. Train Adapt Optimize (TAO) Toolkit is a simple, easy-to-use, Python-based AI toolkit for taking purpose-built AI models and customizing them with users' own data.

Learning Objective

This AutoML notebook shows how to identify the optimal hyperparameters (e.g., learning rate, batch size, weight regularizer, number of layers) so that AI models for a classification application reach better accuracy or converge faster.

- Take a pretrained model and choose the AutoML algorithm and parameters to start an AutoML training run.

- At the end of an AutoML run, you will receive a config file that specifies the best performing model, along with the binary model file to deploy it to your application.
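
To make the idea of a hyperparameter search concrete, here is a minimal sketch of the general loop: propose a configuration, score it, and keep the best one. It uses plain random search, swapped in purely for illustration; it is not the algorithm the TAO service runs. The scoring function `mock_validation_accuracy`, the search ranges, and all parameter names are hypothetical stand-ins for a real training run.

```python
import math
import random


def mock_validation_accuracy(learning_rate, batch_size):
    """Hypothetical stand-in for a training run.

    Returns a score in (0, 1] that peaks at learning_rate=1e-3, batch_size=64.
    A real AutoML run would train a model and report its validation metric.
    """
    lr_penalty = abs(math.log10(learning_rate) - math.log10(1e-3))
    bs_penalty = abs(math.log2(batch_size) - math.log2(64))
    return 1.0 / (1.0 + lr_penalty + 0.1 * bs_penalty)


def random_search(num_trials, seed=0):
    """Sample configurations at random and return the best (config, score)."""
    rng = random.Random(seed)
    best_config, best_score = None, float("-inf")
    for _ in range(num_trials):
        config = {
            # Sample the learning rate on a log scale between 1e-5 and 1e-1.
            "learning_rate": 10 ** rng.uniform(-5, -1),
            # Assumed candidate batch sizes, chosen only for this example.
            "batch_size": rng.choice([16, 32, 64, 128]),
        }
        score = mock_validation_accuracy(**config)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score


best_config, best_score = random_search(num_trials=20)
print("best config:", best_config)
print("best score:", round(best_score, 4))
```

The returned `best_config` plays the role of the config file mentioned above: it records which sampled hyperparameters performed best under the chosen metric.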

The workflow in a nutshell

- Create train and eval datasets

- Upload datasets to the service

- Set AutoML algorithm configurations

- Add/Remove AutoML parameters

- Override train config defaults

- Run AutoML

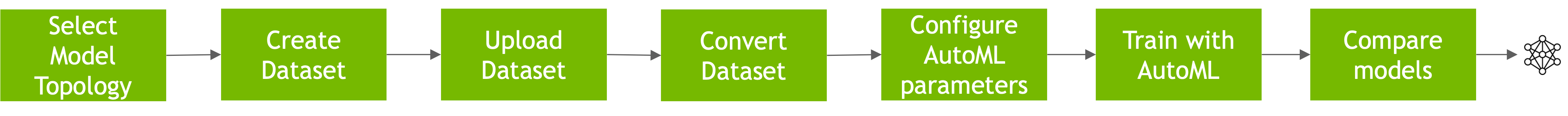

AutoML Workflow

The user starts by selecting a model topology, then creates and uploads a dataset, configures parameters, trains with AutoML, and finally compares the resulting models.
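
The configuration steps of this workflow (adding or removing AutoML parameters and overriding train config defaults) can be pictured with ordinary Python data structures. The sketch below is only an illustration under stated assumptions: the config layout, the dotted parameter names, and the helper `deep_update` are all hypothetical and do not reflect the actual specs returned by the TAO service.

```python
import copy


def deep_update(defaults, overrides):
    """Return a copy of `defaults` with `overrides` merged in recursively."""
    merged = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_update(merged[key], value)
        else:
            merged[key] = value
    return merged


# Hypothetical default train config; real defaults come from the service.
default_train_config = {
    "train": {"num_epochs": 80, "batch_size": 64},
    "optimizer": {"learning_rate": 0.01},
}

# Hypothetical set of hyperparameters that AutoML is allowed to vary.
automl_params = {"optimizer.learning_rate", "train.batch_size"}
automl_params.add("train.weight_decay")    # add a parameter to the search
automl_params.discard("train.batch_size")  # remove one from the search

# Override a default that AutoML should not touch, e.g. a shorter run.
train_config = deep_update(default_train_config, {"train": {"num_epochs": 10}})

print(sorted(automl_params))
print(train_config)
```

Merging into a deep copy keeps the defaults untouched, so the same defaults can be reused for a second experiment with different overrides.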

Table of contents

- Install TAO remote client

- Set the remote service base URL

- Access the shared volume

- Create the datasets

- List datasets

- Create a model experiment

- Find pretrained model

- Set AutoML related configurations

- Provide train specs

- Run AutoML train

- Get the best model from AutoML

- Delete experiment

- Delete datasets

- Unmount shared volume

Requirements

Please find the server requirements here