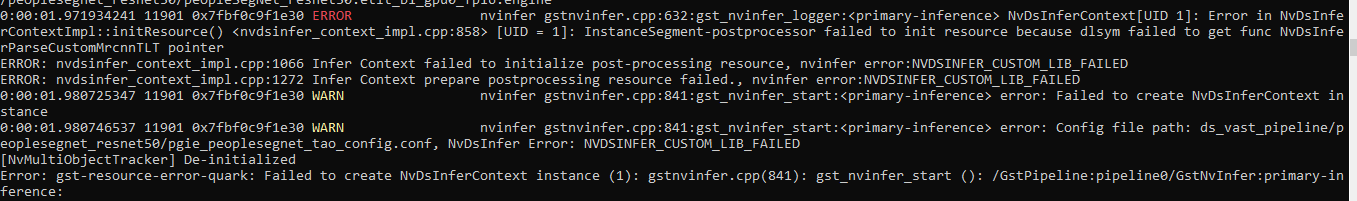

Hi, I am trying to run the PeopleSegNet (MaskRCNN) TAO (.etlt) model in DeepStream. I am using the config file provided here (deepstream_tao_apps/configs/peopleSegNet_tao at master · NVIDIA-AI-IOT/deepstream_tao_apps · GitHub), and I have built and replaced “libnvinfer_plugin.so*” as described in this doc (deepstream_tao_apps/TRT-OSS/x86 at master · NVIDIA-AI-IOT/deepstream_tao_apps · GitHub). I am unable to run the model; please see the attached screenshot for the error message. Could you please help us resolve this issue?

DeepStream config file: deepstream_tao_apps/pgie_peopleSegNet_tao_config.txt at master · NVIDIA-AI-IOT/deepstream_tao_apps · GitHub

Model: PeopleSegNet TAO model, downloaded from https://api.ngc.nvidia.com/v2/models/nvidia/tao/peoplesegnet/versions/deployable_v1.0/zip

Labels file: deepstream_tao_apps/peopleSegNet_labels.txt at master · NVIDIA-AI-IOT/deepstream_tao_apps · GitHub

Hardware Platform: Tesla T4

DeepStream Version: 6.0

TensorRT Version: 8.0.1

CUDA Version: 11.3

Thanks