Hey, I’m trying to remotely profile a custom binary that runs in a container.

I have a k3s cluster running a pod with a container that holds the binary. The binary runs under an L4T image. I have also added SSH access to this container, so the Dockerfile looks like this:

FROM nvcr.io/nvidia/l4t-base:r32.6.1

ENV DEBIAN_FRONTEND=noninteractive

RUN apt-get update -y && \
    DEBIAN_FRONTEND=noninteractive apt-get install -y --no-install-recommends \
        apt-transport-https \
        ca-certificates \
        gnupg \
        software-properties-common \
        openssh-server \
        curl \
        vim \
        wget && \
    rm -rf /var/lib/apt/lists/*

## SSH starts
RUN mkdir -p /greeneye/config/ && \
    chown -R root:root /greeneye && \
    chmod -R 0700 /greeneye && \
    chown root:root -R /root

# SSH login fix. Otherwise user is kicked off after login
RUN sed 's@session\s*required\s*pam_loginuid.so@session optional pam_loginuid.so@g' -i /etc/pam.d/sshd

# CUDA environment is not passed by default to the SSH session. One has to export it in /etc/profile
ENV PATH /usr/local/cuda-10.1/bin:$PATH

# https://stackoverflow.com/a/64472380/554540
ENV LD_LIBRARY_PATH /usr/local/cuda-10.1/lib64:/usr/local/cuda-10.2/lib64:$LD_LIBRARY_PATH

RUN echo "export PATH=$PATH" >> /etc/profile && \
    echo "ldconfig" >> /etc/profile

ENV NOTVISIBLE "in nsight profile"
RUN echo "export VISIBLE=now" >> /etc/profile

RUN ssh-keygen -P "" -t dsa -f /etc/ssh/ssh_host_dsa_key

EXPOSE 9022

RUN wget -qO - https://developer.download.nvidia.com/devtools/repos/ubuntu2004/arm64/nvidia.pub | apt-key add - && \
    echo "deb https://developer.download.nvidia.com/devtools/repos/ubuntu2004/arm64/ /" >> /etc/apt/sources.list.d/nsight.list && \
    apt-get update -y && \
    DEBIAN_FRONTEND=noninteractive apt-get install -y --no-install-recommends \
        nsight-compute-2021.3.1 nsight-systems-cli && \
    rm -rf /var/lib/apt/lists/*

ENV PATH="/opt/nvidia/nsight-compute/2021.3.1:${PATH}"

COPY entrypoint.sh entrypoint.sh
RUN chmod +x entrypoint.sh

RUN mkdir /var/run/sshd
RUN echo "root:docker"|chpasswd

COPY sshd_config /etc/ssh/sshd_config
COPY scripts /greeneye/scripts
RUN chmod +x /greeneye/scripts/*.sh

ENTRYPOINT /greeneye/scripts/wrapper.sh

wrapper.sh

#!/bin/bash

# Start the first process
exec /greeneye/scripts/run-ssh-service.sh &
status=$?
if [ $status -ne 0 ]; then
  echo "Failed to start run-ssh-service: $status"
  exit $status
fi

# Start the second process
exec /greeneye/scripts/run-detector-service.sh &
status=$?
if [ $status -ne 0 ]; then
  echo "Failed to start run-detector-service: $status"
  exit $status
fi

# Naive check runs checks once a minute to see if either of the processes exited.
# This illustrates part of the heavy lifting you need to do if you want to run
# more than one service in a container. The container exits with an error
# if it detects that either of the processes has exited.
# Otherwise it loops forever, waking up every 60 seconds
while sleep 60; do
  ps aux |grep run-ssh-service |grep -q -v grep
  PROCESS_1_STATUS=$?
  ps aux |grep run-detector-service |grep -q -v grep
  PROCESS_2_STATUS=$?
  # If the greps above find anything, they exit with 0 status
  # If they are not both 0, then something is wrong
  if [ $PROCESS_1_STATUS -ne 0 ]; then
    echo "SSH service process exited"
    exit 1
  fi
  if [ $PROCESS_2_STATUS -ne 0 ]; then
    echo "detector service process exited"
    exit 1
  fi
done
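As a side note on the polling loop above: the status captured right after a backgrounded command is always 0, and `ps aux | grep` can match unrelated processes. A minimal sketch of an alternative, assuming it is acceptable to track the PIDs from `$!` and probe them with `kill -0`, could look like this. The function names `is_running` and `supervise` and the `POLL_SECONDS` variable are hypothetical and not part of the scripts in this post.

```shell
#!/bin/bash

# Return 0 while the process with the given PID still exists.
is_running() {
    kill -0 "$1" 2>/dev/null
}

# Start two commands in the background, remember their PIDs,
# and poll until at least one of them has exited.
# POLL_SECONDS is a hypothetical knob; it defaults to 60 like the loop above.
supervise() {
    "$1" &
    pid_a=$!
    "$2" &
    pid_b=$!
    while is_running "$pid_a" && is_running "$pid_b"; do
        sleep "${POLL_SECONDS:-60}"
    done
    # Stop whichever command is still alive, then report failure
    # so the container exits with an error.
    kill "$pid_a" "$pid_b" 2>/dev/null
    return 1
}
```

In wrapper.sh this would be called as `supervise /greeneye/scripts/run-ssh-service.sh /greeneye/scripts/run-detector-service.sh`, followed by `exit 1`.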

run-ssh-service.sh

chmod 700 /greeneye/config
chmod 600 /greeneye/config/*
chmod 644 -f ~/.ssh/known_hosts
chown -R root:root /greeneye/config
/usr/sbin/sshd -D -e

run-detector-service.sh

#!/bin/bash
/bin/sh -c \
"./Detector"

pod.yaml

apiVersion: v1
kind: Pod
metadata:
  name: ubuntu-pod-playground
spec:
  containers:
  - name: mypod
    image: myimage
    env:
    - name: LD_PRELOAD
      value: /opt/nvidia/nsight_systems/libToolsInjectionProxy64.so
    - name: CUDA_INJECTION64_PATH
      value: /opt/nvidia/nsight_systems/libToolsInjection64.so
    - name: QUADD_INJECTION_PROXY
      value: OSRT, $QUADD_INJECTION_PROXY
    imagePullPolicy: Always
    name: detector
    securityContext:
      privileged: true
      capabilities:
        add:
        - SYS_ADMIN

Usage

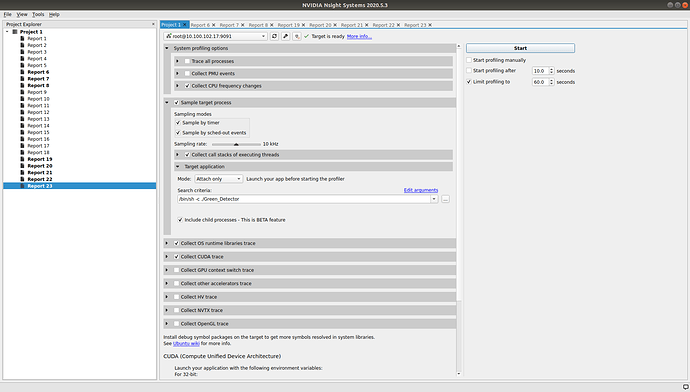

On the host computer (Ubuntu), which I also used to install JetPack (currently 4.5.1), I use Nsight Systems 2020.5.3.

It has been quite difficult to follow the documentation, as some pages state that one can only use Attach with remote SSH and others state that it’s possible to launch remotely. I have only tried to attach, but it keeps showing error messages such as:

Failed to connect to the application. Has it been run with Injection library?

CUDA profiling might have not been started correctly.

No CUDA events collected. Does the process use CUDA?

In some cases I see the following error as well, but not always:

Event requestor failed: Source ID=

Type=ErrorInformation (18)

Properties:

ErrorText (100)=Throw location unknown (consider using BOOST_THROW_EXCEPTION)

Dynamic exception type: boost::exception_detail::clone_impl

std::exception::what: ConvertEventError

[QuadDDaemon::tag_error_code*] = 55

Using LD_PRELOAD=/opt/nvidia/nsight_systems/libToolsInjectionProxy64.so at start doesn’t make much sense as it produces an error:

ERROR: ld.so: object '/opt/nvidia/nsight_systems/libToolsInjectionProxy64.so' from LD_PRELOAD cannot be preloaded (cannot open shared object file): ignored.

This happens because /opt/nvidia/nsight_systems is created by the host Nsight Systems, so the library does not exist yet when the container starts.
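To illustrate the ordering problem, here is a minimal sketch of a guard that only adds LD_PRELOAD when the injection library is actually present. It is plain shell, not an Nsight Systems mechanism, and the function names `injection_lib_if_present` and `detector_command` are hypothetical.

```shell
#!/bin/bash

# Print the library path only if the file exists and is readable.
injection_lib_if_present() {
    if [ -r "$1" ]; then
        printf '%s\n' "$1"
    fi
}

# Print the command line for the detector. LD_PRELOAD is added only
# when the library is present, so ld.so has nothing to complain about
# when the host tool has not deployed its files yet.
detector_command() {
    lib="$(injection_lib_if_present "$1")"
    if [ -n "$lib" ]; then
        printf 'env LD_PRELOAD=%s ./Detector\n' "$lib"
    else
        printf './Detector\n'
    fi
}
```

Calling `detector_command /opt/nvidia/nsight_systems/libToolsInjectionProxy64.so` at container start would print the plain `./Detector` line until the directory has been populated. This only avoids the ld.so error; whether the profiler can then attach is a separate question.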

I did check "Include child processes", which seems to be required in my case.

Questions

- Is it possible to use containers to run this scenario?

- What am I missing?

- Do I need to run the binary with `ncu`, e.g. `ncu ./Detector`, instead?
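For concreteness, this is roughly what wrapping the binary with a CLI profiler inside the container could look like, as opposed to attaching from the host GUI. It is a sketch under assumptions: `nsys` (from the nsight-systems-cli package) and `ncu` are on PATH, the report name `/tmp/detector` is made up, and the helper `profiler_command` is hypothetical. It only prints the command line and does not run anything.

```shell
#!/bin/bash

# Print the command line that would run a target under a CLI profiler.
# $1 = tool ("nsys" or "ncu"), $2 = report name (hypothetical),
# remaining arguments = the target command.
profiler_command() {
    tool="$1"
    report="$2"
    shift 2
    case "$tool" in
        # Nsight Systems CLI: trace CUDA and OS runtime calls.
        nsys) printf 'nsys profile --trace=cuda,osrt --output=%s %s\n' "$report" "$*" ;;
        # Nsight Compute CLI: kernel-level profiling.
        ncu)  printf 'ncu -o %s %s\n' "$report" "$*" ;;
        # Unknown tool: run the target unprofiled.
        *)    printf '%s\n' "$*" ;;
    esac
}
```

For example, `profiler_command nsys /tmp/detector ./Detector` prints `nsys profile --trace=cuda,osrt --output=/tmp/detector ./Detector`, which could stand in for the plain `./Detector` call in run-detector-service.sh.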