Hello Forum!

I am trying to do the following simple operations on some tall skinny matrices (# of rows >> # of cols):

blockVectorR = blockVectorX * lambda

blockVectorR = blockVectorX - blockVectorR

activeBlockVectorR = get the active columns of blockVectorR(see activeMask, column i is active if activeMask[i] = 1)

XtactR = blockVectorX’* activeBlockVectorR

[Dimension: blockVectorR, blockVectorX => Mblocksize, activeBlockVectorR => McurrentBlockSize, lambda => blocksizeblocksize, XtactR => blocksizecurrentBlockSize]

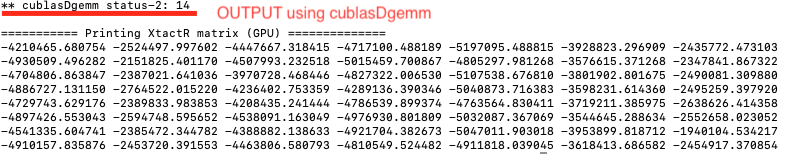

I am using both cublasDgemm routine and OpenMP target offloading for this purpose. But the weird thing is, the very last cublasDgemm call [which is doing transpose(X)*Y type operation] is giving me CUBLAS_STATUS_INTERNAL_ERROR. Though the the final output of the following codeblock matches with two other implementation of the same oprations [ one is using Intel MKL library & cpu kernels and the other one is using NVBLAS dgemm routine and same GPU kernels in following codeblock]. I have attached the outputs here.

Can anyone please point me what am I doing wrong? Or am I missing something here? I really appreciate your help.

Thanks

Fazlay

#include <stdio.h>

#include <stdlib.h>

#ifdef USE_CUBLAS

#include <cuda_runtime.h>

#include "cublas_v2.h"

#endif

#define DGEMM_RESTRICT __restrict__

//Custom kernels for GPU

void getActiveBlockVector_GPU_v2(double *activeBlockVectorR, int *activeMask, double *blockVectorR, int M, int blocksize, int currentBlockSize);

void mat_sub_GPU_v2(double *src1, double *src2, double *dst, const int row, const int col);

//Utility Kernel

void print_matrix(double *array, int row, int col);

int main()

{

int M = 4985944, blocksize = 8;

int i, j, k;

int currentBlockSize = 7;

const double cudaAlpha = 1.0;

const double cudaBeta = 0.0;

int activeMask[8];

activeMask[0] = 1 ; activeMask[1] = 1 ; activeMask[2] = 1 ; activeMask[3] = 1 ;

activeMask[4] = 1 ; activeMask[5] = 1 ; activeMask[6] = 1 ; activeMask[7] = 0;

double* DGEMM_RESTRICT blockVectorX = (double*) malloc(sizeof(double) * M * blocksize);

double* DGEMM_RESTRICT lambda = (double*) malloc(sizeof(double) * blocksize * blocksize);

double* DGEMM_RESTRICT blockVectorR = (double*) malloc(sizeof(double) * M * blocksize);

double* DGEMM_RESTRICT activeBlockVectorR = (double*) malloc(sizeof(double) * M * blocksize);

double* DGEMM_RESTRICT XtactR = (double*) malloc(sizeof(double) * blocksize * currentBlockSize);

double* DGEMM_RESTRICT cblas_XtactR = (double*) malloc(sizeof(double) * blocksize * currentBlockSize);

double* DGEMM_RESTRICT nvblas_XtactR = (double*) malloc(sizeof(double) * blocksize * currentBlockSize);

srand(0);

//#pragma omp paralle for default(shared) private(j)

for(i = 0 ; i < M ; i++)

{

for(j = 0 ; j < blocksize ; j++)

{

blockVectorX[i * blocksize + j] = (double)rand()/(double)RAND_MAX;

}

}

//#pragma omp paralle for default(shared) private(j)

for(i = 0 ; i < blocksize ; i++)

{

for(j = 0 ; j < blocksize ; j++)

{

lambda[i * blocksize + j] = (double)rand()/(double)RAND_MAX;

}

}

//======== offloading to GPU start ==========//

#if defined(USE_CUBLAS)

double *d_blockVectorX, *d_lambda, *d_blockVectorR, *d_activeBlockVectorR, *devPtrXtR, *devPtrXtactR;

int *d_activeMask;

cudaError_t cuberror;

cuberror = cudaMalloc ((void**)&d_blockVectorX, M * blocksize * sizeof(double));

if( cuberror != 0 ){ printf("cudaMalloc Filed d_blockVectorX\n"); return 0; }

cuberror = cudaMalloc ((void**)&d_lambda, blocksize * blocksize * sizeof(double));

if( cuberror != 0 ){ printf("cudaMalloc Filed d_lambda\n"); return 0; }

cuberror = cudaMalloc ((void**)&d_blockVectorR, M * blocksize * sizeof(double));

if( cuberror != 0 ){ printf("cudaMalloc Filed d_blockVectorR\n"); return 0; }

cuberror = cudaMalloc ((void**)&d_activeBlockVectorR, M * currentBlockSize * sizeof(double));

if( cuberror != 0 ){ printf("cudaMalloc Filed d_activeBlockVectorR\n"); return 0; }

cuberror = cudaMalloc ((void**)&devPtrXtR, blocksize * blocksize * sizeof(double));

if( cuberror != 0 ){ printf("cudaMalloc Filed d_blockVectorR\n"); return 0; }

cuberror = cudaMalloc ((void**)&devPtrXtactR, blocksize * currentBlockSize * sizeof(double));

if( cuberror != 0 ){ printf("cudaMalloc Filed devPtrXtactR\n"); return 0; }

cuberror = cudaMalloc ((void**)&d_activeMask, blocksize * sizeof(int));

if( cuberror != 0 ){ printf("cudaMalloc Filed d_activeMask\n"); return 0; }

cuberror = cudaMemcpy((void *)d_blockVectorX, blockVectorX, M * blocksize * sizeof(double), cudaMemcpyHostToDevice);

if( cuberror != 0 ){ printf("cudaMemcpy X ==> %d\n", cuberror); return 0; }

cuberror = cudaMemcpy((void *)d_activeMask, activeMask, blocksize * sizeof(int), cudaMemcpyHostToDevice);

if( cuberror != 0 ){ printf("cudaMemcpy d_activeMask ==> %d\n", cuberror); return 0; }

cuberror = cudaMemcpy((void *)d_lambda, lambda, blocksize * blocksize * sizeof(double), cudaMemcpyHostToDevice);

if( cuberror != 0 ){ printf("cudaMemcpy lambda ==> %d\n", cuberror); return 0; }

cublasStatus_t cubstat;

cublasHandle_t handle;

cublasStatus_t gemmStat;

cubstat = cublasCreate(&handle);

if( cubstat != CUBLAS_STATUS_SUCCESS ){ printf("HandleCreationFailure\n"); return 0; }

//blockVectorR = blockVectorX * lambda

gemmStat = cublasDgemm(handle, CUBLAS_OP_N, CUBLAS_OP_N, blocksize, M, blocksize,

&cudaAlpha, d_lambda, blocksize, d_blockVectorX, blocksize, &cudaBeta, d_blockVectorR, blocksize);

if(gemmStat != CUBLAS_STATUS_SUCCESS) {printf("** cublasDgemm status-1: %d\n", gemmStat);}

//blockVectorR = blockVectorX - blockVectorR

mat_sub_GPU_v2(d_blockVectorX, d_blockVectorR, d_blockVectorR, M, blocksize);

//activeBlockVectorR = get the active columns of blockVectorR(first 7 columns are active, see activeMask array)

getActiveBlockVector_GPU_v2(d_activeBlockVectorR, d_activeMask, d_blockVectorR, M, blocksize, currentBlockSize);

//XtactR = blockVectorX'* activeBlockVectorR

gemmStat = cublasDgemm(handle, CUBLAS_OP_N, CUBLAS_OP_T, currentBlockSize, blocksize, M,

&cudaAlpha, d_activeBlockVectorR, currentBlockSize, d_blockVectorX, blocksize, &cudaBeta, devPtrXtactR, currentBlockSize);

if(gemmStat != CUBLAS_STATUS_SUCCESS) {printf("** cublasDgemm status-2: %d\n", gemmStat);}

//copying result back to CPU

cuberror = cudaMemcpy(XtactR, devPtrXtactR, blocksize * currentBlockSize * sizeof(double), cudaMemcpyDeviceToHost);

if( cuberror != 0 ){ printf("cudaMemcpy Failed XtactR: %d\n", cuberror);}

printf("\n=========== Printing XtactR matrix (GPU) ==============\n");

print_matrix(XtactR, blocksize, currentBlockSize);

#endif

#if defined(USE_CUBLAS)

cudaFree (d_blockVectorX);

cudaFree (d_lambda);

cudaFree (d_blockVectorR);

cudaFree (devPtrXtR);

cublasDestroy(handle);

#endif

return 0;

}

//GPU custom kernels

void getActiveBlockVector_GPU_v2(double *activeBlockVectorR, int *activeMask, double *blockVectorR, int M, int blocksize, int currentBlockSize)

{

int i, j, k = 0;

#pragma omp target is_device_ptr(activeBlockVectorR, blockVectorR, activeMask)

#pragma omp teams distribute parallel for default(shared) private(j, k)

for(i = 0 ; i < M ; i++)

{

k = 0;

for(j = 0 ; j < blocksize ; j++)

{

if(activeMask[j] == 1)

{

activeBlockVectorR[i * currentBlockSize + k] = blockVectorR[i * blocksize + j];

k++;

}

}

}

}

void mat_sub_GPU_v2(double *src1, double *src2, double *dst, const int row, const int col)

{

int i, j;

#pragma omp target is_device_ptr(src1, src2, dst)

#pragma omp teams distribute parallel for private(j) default(shared)

for(i = 0; i < row ; i++)

{

for(j = 0 ; j < col ; j++)

{

dst[i * col + j] = src1[i * col + j] - src2[i * col + j];

}

}

}

//Utility kernel

void print_matrix(double *array, int row, int col)

{

int i, j;

for(i = 0; i < row ; i++)

{

for(j = 0 ; j < col ; j++)

{

printf("%lf ", array[i * col + j]);

}

printf("\n");

}

}