Hello,

I have decided to create this topic to show and explain my project.

First, I would like to apologize for my poor English (I am French).

So, Electronically Assisted Astronomy (EAA) is quite different from traditional astrophotography.

With EAA, the camera replaces your eye. That is to say, you look at the sky with a camera instead of your eye. The purpose is not to make a beautiful picture of the sky (although you could) but simply to observe the deep sky, either by watching it on a screen or by recording a video of your observations.

In July 2018, I decided to create my own EAA system. I wanted a fully autonomous system that I could control from a laptop PC inside my home (using a VNC client) instead of staying outside during very cold nights.

My EAA system is quite simple:

- a 2-axis mount with stepper motors

- an absolute gyroscope/compass to know where my camera is pointing

- a planetary camera

- a lens

I made a prototype to test the mechanical system, the electronics, the computer (SBC) and the software solutions.

For my first try, I decided to use a Raspberry Pi 3 B+ to control the mount and the camera. For the software, I chose Python because:

- the last time I wrote a program was in 1994, in Turbo Pascal, so my software knowledge is not really up to date!

- Python is quite a simple language and I wanted to get results quickly

So, I wrote 2 programs:

- the first program controls the camera, applies real-time filters to the captured frames and saves videos

- the second program controls the mount, allows manual control of the mount with a joystick and gets information from the gyroscope/compass.
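To illustrate the manual control part, here is a minimal sketch of how a joystick axis reading could be turned into a stepper speed. It is not my actual code: the function name `joystick_to_step_rate`, the dead zone and the maximum rate of 400 steps per second are assumptions chosen only for the example.

```python
def joystick_to_step_rate(axis_value, deadzone=0.1, max_rate=400.0):
    """Map a joystick axis reading in [-1, 1] to a signed step rate (steps/s).

    All names and default values here are hypothetical illustration values.
    """
    # Clamp the reading in case the joystick driver overshoots the range.
    value = max(-1.0, min(1.0, axis_value))
    magnitude = abs(value)

    # Ignore small readings so the mount does not drift when the stick is at rest.
    if magnitude < deadzone:
        return 0.0

    # Rescale the remaining travel to [0, 1], then square it to get
    # fine control near the centre and full speed at the end of travel.
    scaled = (magnitude - deadzone) / (1.0 - deadzone)
    rate = max_rate * scaled * scaled
    return rate if value > 0 else -rate
```

The sign of the result would select the motor direction and its absolute value the delay between steps. The squared response is one possible choice; a linear one would also work.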

It took me about 5 months to write the programs and create the whole system.

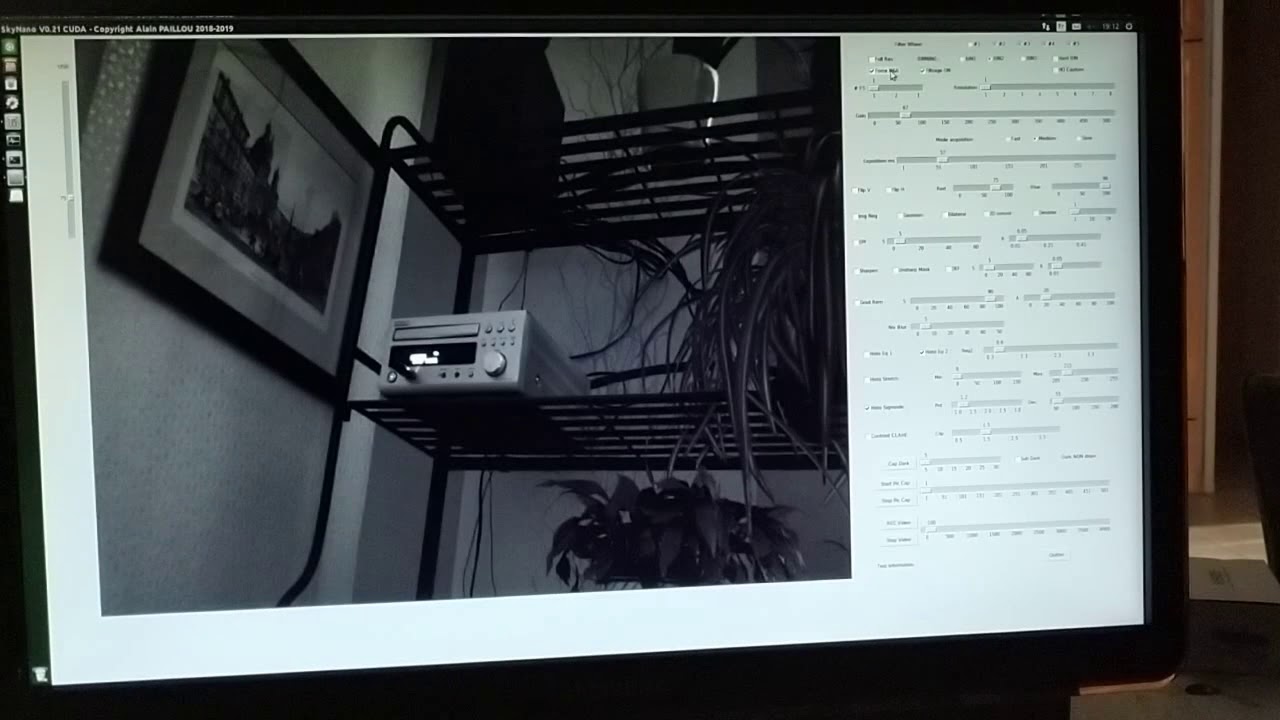

Here is what my capture software looks like:

And here is what my system looks like:

The system is inspired by the 2-meter telescope at the Pic du Midi in France:

- at the top, we have the mount (motors), the optics (an old but good Canon FD 50mm f/1.4) and the camera

- below, we have the electronics and the computer

- at the base, we have the power stage.

Here is the system with its case:

The system can communicate with my laptop PC through Wi-Fi or broadband over power line.

Don’t forget this is a prototype. I have learned a million things building it, and I am planning to make a new version that is stronger, more precise and, I hope, definitive.

But for the coming months, software is my priority.

Why real-time filtering of the captured frames?

A planetary camera does the job quite well, but deep sky objects are not very bright, so we have to raise the camera gain quite high (to avoid long exposure times; EAA needs short exposure times). As a result, the image is very noisy.
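To give an idea of what a real-time noise filter can look like, here is a minimal sketch of temporal averaging (an exponential moving average over successive frames). This is only an illustration of one common technique, not necessarily one of the filters in my software. Frames are plain lists of rows to keep the example self-contained, and the name `blend_frame` and the value of `alpha` are assumptions.

```python
def blend_frame(accumulated, new_frame, alpha=0.2):
    """Blend a new frame into a running average (exponential moving average).

    accumulated: previous averaged frame (list of rows), or None for the first frame.
    new_frame:   latest captured frame, same shape.
    alpha:       weight of the new frame; smaller means more smoothing but more lag.
    """
    if accumulated is None:
        # First frame: start the average from the raw pixel values.
        return [[float(p) for p in row] for row in new_frame]

    # Random noise differs from frame to frame and averages out,
    # while the (static) deep sky signal is preserved.
    return [
        [(1.0 - alpha) * a + alpha * p for a, p in zip(acc_row, new_row)]
        for acc_row, new_row in zip(accumulated, new_frame)
    ]


# Usage: a pixel whose true value is 100, with +/-10 of alternating noise.
average = None
for i in range(50):
    noisy = [[110 if i % 2 == 0 else 90]]
    average = blend_frame(average, noisy)
```

The cost is one multiply-add per pixel per frame, which is why this kind of filter is a candidate for real-time use. The drawback is that it smears anything that moves between frames.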

We also have to manage light pollution to get a sky as dark as possible, while preserving the light of the deep sky objects.

Of course, I could apply post-capture processing, but that is not the EAA philosophy. EAA means real-time visualisation, so the filters must be real-time filters.

The Raspberry Pi 3 B+ performs the real-time filtering to improve video quality.
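As an illustration of the idea, here is a minimal sketch that darkens the sky by subtracting an estimate of the background level from every pixel. It assumes that most of the frame is empty sky, so the median pixel value is a reasonable estimate of the light pollution level. The function name `subtract_sky_background` and the `strength` parameter are hypothetical, and this is not necessarily the method my software uses.

```python
from statistics import median


def subtract_sky_background(frame, strength=1.0):
    """Subtract an estimated sky background level from a grayscale frame.

    frame:    list of rows of pixel values.
    strength: fraction of the estimated background to remove (1.0 = all of it).
    """
    # Assumption: most pixels are empty sky, so the median is the sky glow level.
    pixels = [p for row in frame for p in row]
    background = median(pixels)
    offset = strength * background

    # Remove the glow and clip at zero; brighter objects keep their excess light.
    return [[max(0.0, p - offset) for p in row] for row in frame]


# Usage: a uniform sky glow of 20 with one bright object of 200 in the middle.
frame = [
    [20, 20, 20],
    [20, 200, 20],
    [20, 20, 20],
]
darkened = subtract_sky_background(frame)
```

A single global level only handles uniform sky glow. A gradient across the field would need a local estimate instead.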

The camera is a ZWO ASI178MC, which uses the Sony IMX178 colour sensor. It’s a very good planetary camera.

I am in touch with ZWO to try to find a better camera. As they are interested in my project, maybe they will agree to provide me with a new camera with a better sensor to try to improve this EAA system.

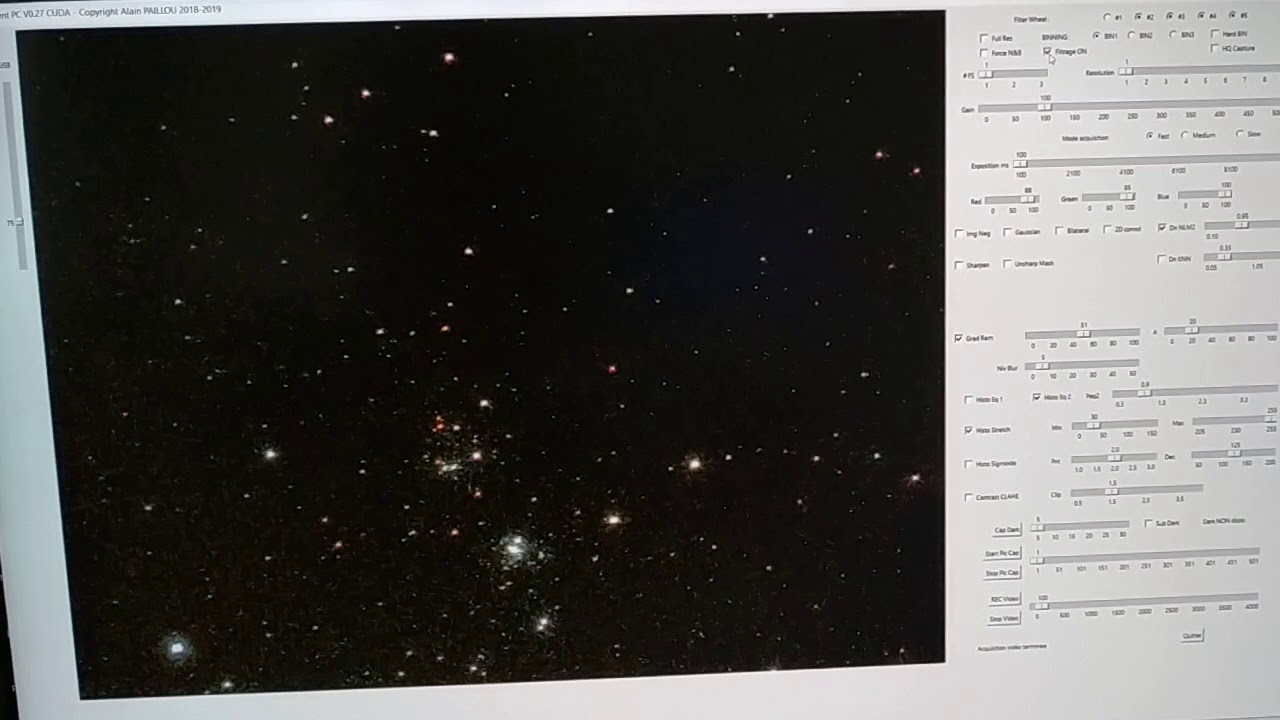

You can see some examples here:

There are still some improvements to make, but the results are interesting.

Real-time filtering needs a very good CPU, and the Raspberry Pi is not the best for that kind of work. So I decided to get a more powerful SBC.

Some SBCs have a much better CPU, like the Odroid N2, which has a big.LITTLE CPU. I tested one and the result is much better than with the Raspberry Pi, but there are still some problems:

- the Mali GPU can’t be used, so all the work is done by the CPU!

- the software support is really, really weak.

So, I decided to ask Nvidia if they could support my project and they said YES. That’s really, really cool.

So, with the Jetson Nano, I will try to get the Maxwell GPU working in order to get much better real-time processing.

This is really interesting for my project. But I will have a lot of work to do because:

- I will probably have to rewrite my acquisition software (camera control) in C++

- I will have to learn CUDA, or at least be able to use OpenCV with CUDA optimisations.

It is a lot of work, but also very interesting work. And this time, I will have all of Nvidia’s experience and knowledge with me (something that Odroid can’t offer me). This can make all the difference.

If my work brings some interesting results, it could be used on more powerful platforms (like a Windows 10 PC with a very good Nvidia GPU).

This is important. You know, astrophotography (mainly planetary or Moon captures) needs a very high frame rate, and currently that is not compatible with real-time filtering to improve acquisition quality (raw captures are really bad and noisy). Sensors are getting better and better, but real-time filtering could really improve what the sensor captures.

So, I am waiting for the Jetson Nano to arrive at home, and then I will start to learn C++ and CUDA, hoping for very interesting and good results. I will probably ask a million questions on the Jetson Nano forum, but I am sure we will find an answer to every question.

The best is yet to come.

Alain