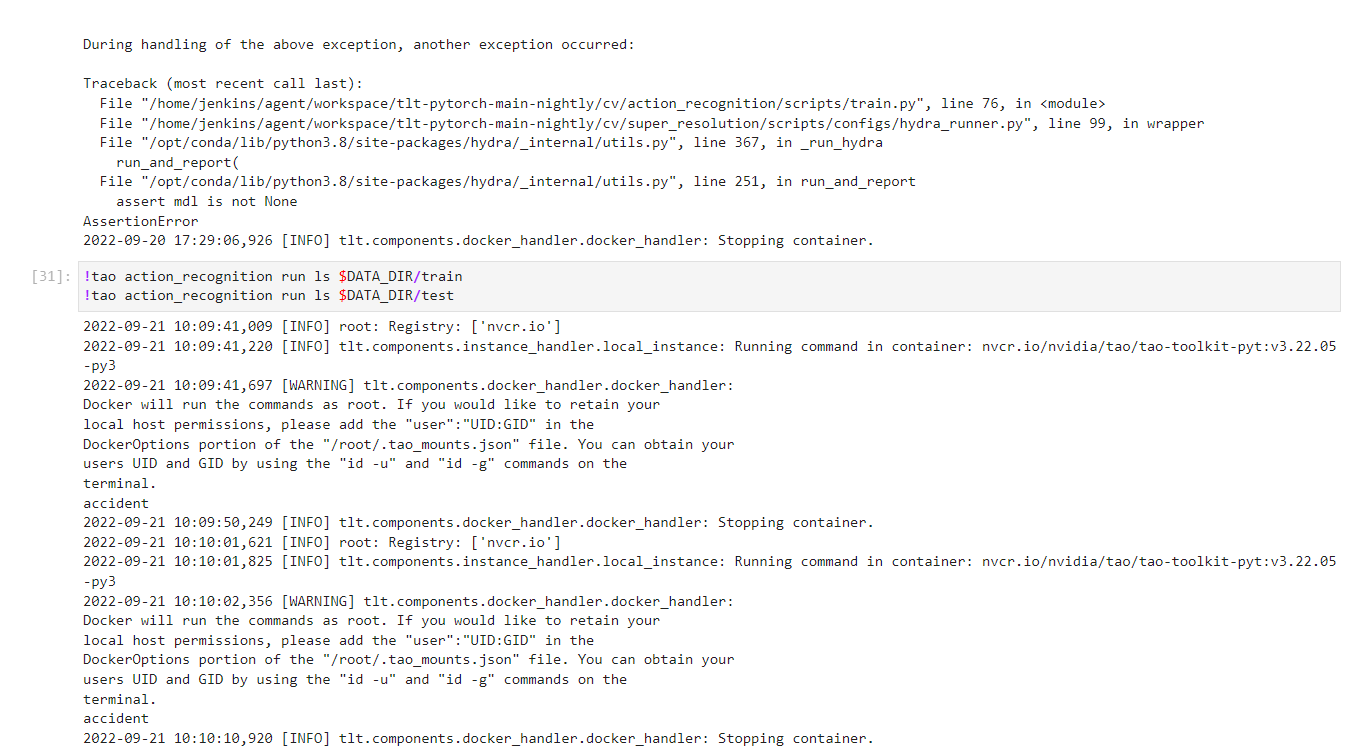

I followed the default notebook and used the dataset mentioned in it, but I am still getting the same error.

Output from the "Train RGB only model with PTM" step:

2022-09-21 11:27:32,925 [INFO] root: Registry: ['nvcr.io']

2022-09-21 11:27:33,120 [INFO] tlt.components.instance_handler.local_instance: Running command in container: nvcr.io/nvidia/tao/tao-toolkit-pyt:v3.22.05-py3

2022-09-21 11:27:33,672 [WARNING] tlt.components.docker_handler.docker_handler:

Docker will run the commands as root. If you would like to retain your

local host permissions, please add the "user":"UID:GID" in the

DockerOptions portion of the "/root/.tao_mounts.json" file. You can obtain your

users UID and GID by using the "id -u" and "id -g" commands on the

terminal.

/home/jenkins/agent/workspace/tlt-pytorch-main-nightly/cv/action_recognition/scripts/train.py:76: UserWarning:

'train_rgb_3d_finetune.yaml' is validated against ConfigStore schema with the same name.

This behavior is deprecated in Hydra 1.1 and will be removed in Hydra 1.2.

See https://hydra.cc/docs/next/upgrades/1.0_to_1.1/automatic_schema_matching for migration instructions.

loading trained weights from /results/pretrained/actionrecognitionnet_vtrainable_v1.0/resnet18_3d_rgb_hmdb5_32.tlt

ResNet3d(

(conv1): Conv3d(3, 64, kernel_size=(5, 7, 7), stride=(2, 2, 2), padding=(2, 3, 3), bias=False)

(bn1): BatchNorm3d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(maxpool): MaxPool3d(kernel_size=(1, 3, 3), stride=2, padding=(0, 1, 1), dilation=1, ceil_mode=False)

(layer1): Sequential(

(0): BasicBlock3d(

(conv1): Conv3d(64, 64, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn1): BatchNorm3d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv3d(64, 64, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn2): BatchNorm3d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(1): BasicBlock3d(

(conv1): Conv3d(64, 64, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn1): BatchNorm3d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv3d(64, 64, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn2): BatchNorm3d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(layer2): Sequential(

(0): BasicBlock3d(

(conv1): Conv3d(64, 128, kernel_size=(3, 3, 3), stride=(1, 2, 2), padding=(1, 1, 1), bias=False)

(bn1): BatchNorm3d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv3d(128, 128, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn2): BatchNorm3d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv3d(64, 128, kernel_size=(1, 1, 1), stride=(1, 2, 2), bias=False)

(1): BatchNorm3d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock3d(

(conv1): Conv3d(128, 128, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn1): BatchNorm3d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv3d(128, 128, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn2): BatchNorm3d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(layer3): Sequential(

(0): BasicBlock3d(

(conv1): Conv3d(128, 256, kernel_size=(3, 3, 3), stride=(1, 2, 2), padding=(1, 1, 1), bias=False)

(bn1): BatchNorm3d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv3d(256, 256, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn2): BatchNorm3d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv3d(128, 256, kernel_size=(1, 1, 1), stride=(1, 2, 2), bias=False)

(1): BatchNorm3d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock3d(

(conv1): Conv3d(256, 256, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn1): BatchNorm3d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv3d(256, 256, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn2): BatchNorm3d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(layer4): Sequential(

(0): BasicBlock3d(

(conv1): Conv3d(256, 512, kernel_size=(3, 3, 3), stride=(1, 2, 2), padding=(1, 1, 1), bias=False)

(bn1): BatchNorm3d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv3d(512, 512, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn2): BatchNorm3d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv3d(256, 512, kernel_size=(1, 1, 1), stride=(1, 2, 2), bias=False)

(1): BatchNorm3d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock3d(

(conv1): Conv3d(512, 512, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn1): BatchNorm3d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv3d(512, 512, kernel_size=(3, 3, 3), stride=(1, 1, 1), padding=(1, 1, 1), bias=False)

(bn2): BatchNorm3d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(avg_pool): AdaptiveAvgPool3d(output_size=(1, 1, 1))

(fc_cls): Linear(in_features=512, out_features=3, bias=True)

)

/opt/conda/lib/python3.8/site-packages/pytorch_lightning/trainer/connectors/callback_connector.py:147: LightningDeprecationWarning: Setting `Trainer(checkpoint_callback=False)` is deprecated in v1.5 and will be removed in v1.7. Please consider using `Trainer(enable_checkpointing=False)`.

rank_zero_deprecation(

GPU available: True, used: True

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

Train dataset samples: 140

Validation dataset samples: 60

LOCAL_RANK: 0 - CUDA_VISIBLE_DEVICES: [0,1,2,3,4,5,6,7]

Adjusting learning rate of group 0 to 3.0000e-04.

| Name | Type | Params

--------------------------------------------

0 | model | ResNet3d | 33.2 M

1 | train_accuracy | Accuracy | 0

2 | val_accuracy | Accuracy | 0

--------------------------------------------

33.2 M Trainable params

0 Non-trainable params

33.2 M Total params

132.747 Total estimated model params size (MB)

/opt/conda/lib/python3.8/site-packages/pytorch_lightning/callbacks/model_checkpoint.py:623: UserWarning: Checkpoint directory /results/rgb_3d_ptm exists and is not empty.

rank_zero_warn(f"Checkpoint directory {dirpath} exists and is not empty.")

Validation sanity check: 0%| | 0/2 [00:00<?, ?it/s]Error executing job with overrides: ['output_dir=/results/rgb_3d_ptm', 'encryption_key=nvidia_tao', 'model_config.rgb_pretrained_model_path=/results/pretrained/actionrecognitionnet_vtrainable_v1.0/resnet18_3d_rgb_hmdb5_32.tlt', 'model_config.rgb_pretrained_num_classes=5']

Traceback (most recent call last):

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/utils.py", line 211, in run_and_report

return func()

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/utils.py", line 368, in <lambda>

lambda: hydra.run(

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/hydra.py", line 110, in run

_ = ret.return_value

File "/opt/conda/lib/python3.8/site-packages/hydra/core/utils.py", line 233, in return_value

raise self._return_value

File "/opt/conda/lib/python3.8/site-packages/hydra/core/utils.py", line 160, in run_job

ret.return_value = task_function(task_cfg)

File "/home/jenkins/agent/workspace/tlt-pytorch-main-nightly/cv/action_recognition/scripts/train.py", line 70, in main

File "/home/jenkins/agent/workspace/tlt-pytorch-main-nightly/cv/action_recognition/scripts/train.py", line 59, in run_experiment

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/trainer/trainer.py", line 737, in fit

self._call_and_handle_interrupt(

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/trainer/trainer.py", line 682, in _call_and_handle_interrupt

return trainer_fn(*args, **kwargs)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/trainer/trainer.py", line 772, in _fit_impl

self._run(model, ckpt_path=ckpt_path)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/trainer/trainer.py", line 1195, in _run

self._dispatch()

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/trainer/trainer.py", line 1274, in _dispatch

self.training_type_plugin.start_training(self)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/plugins/training_type/training_type_plugin.py", line 202, in start_training

self._results = trainer.run_stage()

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/trainer/trainer.py", line 1284, in run_stage

return self._run_train()

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/trainer/trainer.py", line 1306, in _run_train

self._run_sanity_check(self.lightning_module)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/trainer/trainer.py", line 1370, in _run_sanity_check

self._evaluation_loop.run()

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/loops/base.py", line 145, in run

self.advance(*args, **kwargs)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/loops/dataloader/evaluation_loop.py", line 109, in advance

dl_outputs = self.epoch_loop.run(dataloader, dataloader_idx, dl_max_batches, self.num_dataloaders)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/loops/base.py", line 140, in run

self.on_run_start(*args, **kwargs)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/loops/epoch/evaluation_epoch_loop.py", line 86, in on_run_start

self._dataloader_iter = _update_dataloader_iter(data_fetcher, self.batch_progress.current.ready)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/loops/utilities.py", line 121, in _update_dataloader_iter

dataloader_iter = enumerate(data_fetcher, batch_idx)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/utilities/fetching.py", line 199, in __iter__

self.prefetching(self.prefetch_batches)

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/utilities/fetching.py", line 258, in prefetching

self._fetch_next_batch()

File "/opt/conda/lib/python3.8/site-packages/pytorch_lightning/utilities/fetching.py", line 300, in _fetch_next_batch

batch = next(self.dataloader_iter)

File "/opt/conda/lib/python3.8/site-packages/torch/utils/data/dataloader.py", line 521, in __next__

data = self._next_data()

File "/opt/conda/lib/python3.8/site-packages/torch/utils/data/dataloader.py", line 1203, in _next_data

return self._process_data(data)

File "/opt/conda/lib/python3.8/site-packages/torch/utils/data/dataloader.py", line 1229, in _process_data

data.reraise()

File "/opt/conda/lib/python3.8/site-packages/torch/_utils.py", line 438, in reraise

raise exception

omegaconf.errors.ConfigKeyError: Caught ConfigKeyError in DataLoader worker process 0.

Original Traceback (most recent call last):

File "/opt/conda/lib/python3.8/site-packages/torch/utils/data/_utils/worker.py", line 287, in _worker_loop

data = fetcher.fetch(index)

File "/opt/conda/lib/python3.8/site-packages/torch/utils/data/_utils/fetch.py", line 49, in fetch

data = [self.dataset[idx] for idx in possibly_batched_index]

File "/opt/conda/lib/python3.8/site-packages/torch/utils/data/_utils/fetch.py", line 49, in <listcomp>

data = [self.dataset[idx] for idx in possibly_batched_index]

File "/home/jenkins/agent/workspace/tlt-pytorch-main-nightly/cv/action_recognition/dataloader/ar_dataset.py", line 239, in __getitem__

File "/home/jenkins/agent/workspace/tlt-pytorch-main-nightly/cv/action_recognition/dataloader/ar_dataset.py", line 195, in get_frames

File "/opt/conda/lib/python3.8/site-packages/omegaconf/dictconfig.py", line 369, in __getitem__

self._format_and_raise(

File "/opt/conda/lib/python3.8/site-packages/omegaconf/base.py", line 190, in _format_and_raise

format_and_raise(

File "/opt/conda/lib/python3.8/site-packages/omegaconf/_utils.py", line 741, in format_and_raise

_raise(ex, cause)

File "/opt/conda/lib/python3.8/site-packages/omegaconf/_utils.py", line 719, in _raise

raise ex.with_traceback(sys.exc_info()[2]) # set end OC_CAUSE=1 for full backtrace

File "/opt/conda/lib/python3.8/site-packages/omegaconf/dictconfig.py", line 367, in __getitem__

return self._get_impl(key=key, default_value=_DEFAULT_MARKER_)

File "/opt/conda/lib/python3.8/site-packages/omegaconf/dictconfig.py", line 438, in _get_impl

node = self._get_node(key=key, throw_on_missing_key=True)

File "/opt/conda/lib/python3.8/site-packages/omegaconf/dictconfig.py", line 465, in _get_node

self._validate_get(key)

File "/opt/conda/lib/python3.8/site-packages/omegaconf/dictconfig.py", line 166, in _validate_get

self._format_and_raise(

File "/opt/conda/lib/python3.8/site-packages/omegaconf/base.py", line 190, in _format_and_raise

format_and_raise(

File "/opt/conda/lib/python3.8/site-packages/omegaconf/_utils.py", line 821, in format_and_raise

_raise(ex, cause)

File "/opt/conda/lib/python3.8/site-packages/omegaconf/_utils.py", line 719, in _raise

raise ex.with_traceback(sys.exc_info()[2]) # set end OC_CAUSE=1 for full backtrace

omegaconf.errors.ConfigKeyError: Key 'fall_floor' is not in struct

full_key: dataset_config.label_map.fall_floor

reference_type=Optional[Dict[str, int]]

object_type=dict

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/jenkins/agent/workspace/tlt-pytorch-main-nightly/cv/action_recognition/scripts/train.py", line 76, in <module>

File "/home/jenkins/agent/workspace/tlt-pytorch-main-nightly/cv/super_resolution/scripts/configs/hydra_runner.py", line 99, in wrapper

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/utils.py", line 367, in _run_hydra

run_and_report(

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/utils.py", line 251, in run_and_report

assert mdl is not None

AssertionError

2022-09-21 11:28:04,905 [INFO] tlt.components.docker_handler.docker_handler: Stopping container.
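
The final `ConfigKeyError` says that `fall_floor` is not a key in `dataset_config.label_map`. That would be consistent with the dataset containing a class directory that the spec's `label_map` does not list, although the log alone does not confirm it. Below is a minimal sketch for checking this before training. It assumes one sub-directory per class under the dataset root. The function name, the directory layout, and the label names are hypothetical and not part of TAO.

```python
import tempfile
from pathlib import Path
from typing import Dict, List


def find_unmapped_classes(dataset_root: Path, label_map: Dict[str, int]) -> List[str]:
    """Return names of class directories under dataset_root missing from label_map."""
    # Assumption: every immediate sub-directory of dataset_root is one class.
    class_dirs = sorted(p.name for p in dataset_root.iterdir() if p.is_dir())
    return [name for name in class_dirs if name not in label_map]


# Hypothetical example: three class directories, but only two labels in the spec.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    for class_name in ("fall_floor", "ride_bike", "walk"):
        (root / class_name).mkdir()
    label_map = {"ride_bike": 0, "walk": 1}  # stand-in for dataset_config.label_map
    print(find_unmapped_classes(root, label_map))  # prints ['fall_floor']
```

If a check like this reports any names on the real train or validation directories, either adding them to `label_map` in the spec or removing those directories from the dataset should be worth trying.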