I'm new to the Jetson Nano. I've successfully built an app on the Nano using my custom model, but it uses TensorFlow to get the output. To be precise, I'm using the TFLite interpreter because my model was initially built for Android, but I also have it in .pb format.

So what I want to do is run my model with TensorRT instead. Is that possible, or do I have to retrain the model from scratch on the Nano?

Sorry for the late response. Is this still an issue you need support with? Thanks

Thank you for the response. Yes, it still is.

Hi,

You don’t need to retrain it on the Nano.

In particular, Jetson is an embedded system and is not well suited to training.

Below is an example of running a TensorFlow model on the Nano with TensorRT.

Please follow similar procedures to deploy your model:
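One common first step in this kind of flow is converting the TensorFlow model to ONNX with tf2onnx, so that TensorRT's ONNX parser can read it. A minimal sketch, assuming tf2onnx is installed: the helper below only builds (it does not run) the tf2onnx command line for the formats mentioned in this thread, namely a SavedModel directory, a .tflite file, or a frozen .pb graph. The opset 13 default and the tensor names `input:0` / `output:0` are assumptions for illustration, not values from the thread.

```python
import shlex
from typing import List, Optional


def tf2onnx_command(model_path: str,
                    output: str = "model.onnx",
                    opset: int = 13,
                    inputs: Optional[str] = None,
                    outputs: Optional[str] = None) -> List[str]:
    """Build (but do not run) a tf2onnx command for a TensorFlow model.

    Assumption: a .tflite file uses --tflite, a frozen-graph .pb uses
    --graphdef, and anything else is treated as a SavedModel directory.
    """
    cmd = ["python3", "-m", "tf2onnx.convert"]
    if model_path.endswith(".tflite"):
        cmd += ["--tflite", model_path]
    elif model_path.endswith(".pb"):
        # Frozen graphs carry no signature, so tensor names must be given
        # explicitly (hypothetical names here; check your own graph).
        if not inputs or not outputs:
            raise ValueError("a frozen .pb graph needs inputs and outputs")
        cmd += ["--graphdef", model_path, "--inputs", inputs, "--outputs", outputs]
    else:
        # Pass a SavedModel as its directory, not as saved_model.pb.
        cmd += ["--saved-model", model_path]
    cmd += ["--output", output, "--opset", str(opset)]
    return cmd


if __name__ == "__main__":
    print(shlex.join(tf2onnx_command("model.tflite")))
    print(shlex.join(tf2onnx_command("frozen_model.pb",
                                     inputs="input:0", outputs="output:0")))
```

The resulting model.onnx is what TensorRT would then parse to build an engine.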

Thanks.

Thank you very much!

Also, is there a sample for an image classification model such as MobileNet?

Hi,

The flow for the classification model will be simpler.

After converting the model into ONNX format, please follow the steps below to run it with TensorRT:
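For a classification network such as MobileNet, the post-processing after TensorRT inference is typically just a softmax and a top-k over a single score vector. A minimal sketch in plain Python, assuming the engine's output has already been copied back to the host as a flat list with one logit per class; engine building and buffer handling are not shown, and the labels in the usage are hypothetical. If your model already ends in a softmax layer, the extra softmax here changes the probabilities but not the ranking, since softmax preserves order.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(logits: Sequence[float], labels: Sequence[str],
          k: int = 5) -> List[Tuple[str, float]]:
    """Return the k most likely (label, probability) pairs, best first."""
    if len(logits) != len(labels):
        raise ValueError("expected one logit per label")
    probs = softmax(logits)
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(labels[i], probs[i]) for i in order[:k]]


if __name__ == "__main__":
    # Hypothetical 3-class output read back from the TensorRT output buffer.
    scores = [1.0, 3.0, 0.5]
    for label, p in top_k(scores, ["cat", "dog", "bird"], k=2):
        print(f"{label}: {p:.3f}")
```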

Thanks.

I've been trying to install the TensorFlow Object Detection (TFOD) API, which apparently needs tensorflow-addons and tensorflow-text. I followed the solutions from:

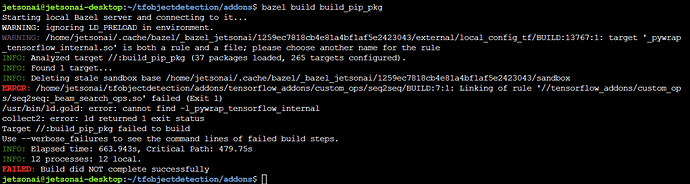

But the build failed:

For tensorflow-addons:

For tensorflow-text:

I've also tried the EfficientDet Python samples (my model is also an EfficientDet network), but I run out of memory during model optimization. I'm using the 2GB Nano.
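On a 2GB Nano, one way to reduce memory pressure while TensorRT builds the engine is to cap the builder workspace and build in FP16; adding swap space may also help. The helper below is a sketch that only assembles a trtexec command. It assumes an ONNX model, the usual JetPack location of trtexec (`/usr/src/tensorrt/bin/trtexec`), and a TensorRT release that still accepts `--workspace` in MiB (newer releases replace it with `--memPoolSize`). The 256 MiB default is an arbitrary starting point, not a measured value, and this is an alternative to the EfficientDet sample scripts rather than a fix for them.

```python
import shlex
from typing import List

# Assumed JetPack install location of trtexec; adjust if yours differs.
TRTEXEC = "/usr/src/tensorrt/bin/trtexec"


def trtexec_command(onnx_path: str, engine_path: str,
                    workspace_mib: int = 256, fp16: bool = True) -> List[str]:
    """Build (but do not run) a trtexec call with a capped builder workspace.

    --workspace is in MiB on the TensorRT 7.x/8.x releases shipped with
    JetPack 4.x; later TensorRT versions use --memPoolSize=workspace:<N>.
    """
    if workspace_mib <= 0:
        raise ValueError("workspace_mib must be positive")
    cmd = [TRTEXEC, f"--onnx={onnx_path}", f"--saveEngine={engine_path}",
           f"--workspace={workspace_mib}"]
    if fp16:
        # Build an FP16 engine; the Nano's GPU supports FP16 natively.
        cmd.append("--fp16")
    return cmd


if __name__ == "__main__":
    print(shlex.join(trtexec_command("model.onnx", "model.engine")))
```

If the build still runs out of memory, lowering `workspace_mib` further trades memory for fewer tactic choices during optimization.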

Sorry for the barrage of questions.

I also have a problem when converting my custom image classification model to ONNX.

The problem is the same as:

It hasn't been solved in that thread yet.

There has been no update from you for a while, so we are assuming this is no longer an issue.

Hence, we are closing this topic. If you need further support, please open a new one.

Thanks

Hi,

Could you share which TensorFlow and tf2onnx versions you are using?
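The installed versions can be read from the package metadata without importing the packages themselves. A small sketch using only the standard library (`importlib.metadata`, Python 3.8+); the distribution names in the usage are assumptions and may differ for your install, for example if TensorFlow came from a differently named wheel.

```python
from importlib import metadata
from typing import Dict, Iterable, Optional


def installed_versions(dists: Iterable[str]) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None if absent."""
    result: Dict[str, Optional[str]] = {}
    for name in dists:
        try:
            result[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            result[name] = None
    return result


if __name__ == "__main__":
    # Assumed distribution names; adjust to match your environment.
    for name, ver in installed_versions(["tensorflow", "tf2onnx", "onnx"]).items():
        print(f"{name}: {ver or 'not installed'}")
```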

Thanks.

This topic was automatically closed 14 days after the last reply. New replies are no longer allowed.