I tried running the following code on a Jetson Xavier NX (L4T 35.1.0, PyTorch 1.12.0):

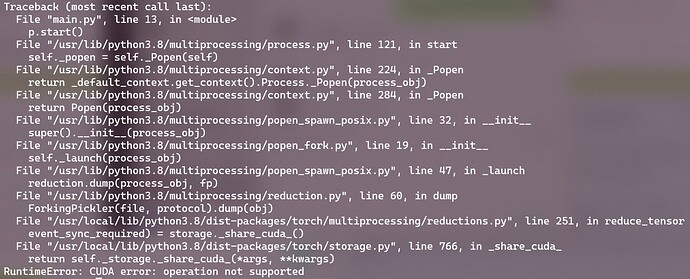

And got the following error:

I am wondering whether this is related to the fact that CUDA IPC is not supported on Jetson (see the thread "Decoupling loading from inference models on multiprocessing"), and whether this limitation comes from software (e.g., the CUDA driver) or hardware.

Thanks in advance.

Hi,

Did you install a PyTorch package that has enabled CUDA support?

It’s recommended to use our prebuilt package listed below:

Thanks.

Yes, I installed the prebuilt PyTorch from here “https://developer.download.nvidia.cn/compute/redist/jp/v502/pytorch/torch-1.13.0a0+d0d6b1f2.nv22.09-cp38-cp38-linux_aarch64.whl”.

torch.cuda.is_available() returns True, and a simple test that adds two CUDA tensors also works. Things only go wrong when spawning processes with CUDA tensors.

I tried both 1.12.0 and 1.13.0 (both prebuilt PyTorch packages) and got the same problem.
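For readers who cannot see the screenshot, here is a minimal sketch of the two situations described above: a plain CUDA addition, which works, and passing a CUDA tensor to a spawned process, which is the pattern reported to fail. This is not the original code from the image; the function names `cuda_sanity_check` and `child` and the tensor sizes are made up for illustration.

```python
import torch
import torch.multiprocessing as mp


def cuda_sanity_check():
    """Return True if adding two CUDA tensors works, None if CUDA is unavailable."""
    if not torch.cuda.is_available():
        return None
    a = torch.ones(3, device="cuda")
    b = torch.ones(3, device="cuda")
    # Compare on the host so the check does not depend on the device.
    return bool(torch.equal((a + b).cpu(), torch.full((3,), 2.0)))


def child(rank, tensor):
    # Hypothetical worker: it receives a CUDA tensor created by the parent.
    # Per the replies in this thread, this hand-off relies on CUDA IPC,
    # which is the part that is not supported on Jetson.
    print(rank, tensor.sum().item())


if __name__ == "__main__":
    print("CUDA add works:", cuda_sanity_check())
    if torch.cuda.is_available():
        shared = torch.ones(3, device="cuda")
        # mp.spawn calls child(rank, shared) in a freshly spawned process.
        mp.spawn(child, args=(shared,), nprocs=1)
```

On a desktop dGPU the spawn step is expected to run; on Jetson it is the step where the error would show up, if the diagnosis in this thread is right.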

Hi,

AFAIK, PyTorch implements multiprocessing with CUDA IPC.

However, on Jetson, CUDA IPC is not supported.

Thanks.

Thanks a lot for your reply. Is this something that is not yet implemented in the driver, or is it a hardware limit? Will it be supported on Jetson NX in the future?

Hi,

CUDA IPC is only available in dGPU environments.

For Jetson, which is an iGPU device, please use NvSCI for inter-process communication.

If PyTorch adds NvSCI support, then the multiprocessing feature can work on Jetson.

Thanks.
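Until then, one possible workaround (not something confirmed in this thread) is to keep tensors on the CPU whenever they cross a process boundary and move them to the GPU inside each worker. PyTorch shares CPU tensors between processes through host shared memory rather than CUDA IPC, so no CUDA IPC handle is needed. The sketch below assumes that approach; `worker`, `main`, and the tensor contents are hypothetical names and values chosen for illustration.

```python
import torch
import torch.multiprocessing as mp


def worker(rank, host_tensor, results):
    # Each process opens its own CUDA context and makes its own device copy,
    # so nothing GPU-resident is passed between processes.
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    local = host_tensor.to(device)
    total = (local + rank).sum()
    # .item() copies the scalar back to the host before it is queued.
    results.put((rank, total.item()))


def main(num_workers=2):
    ctx = mp.get_context("spawn")
    results = ctx.SimpleQueue()
    host_tensor = torch.ones(4)  # stays on the CPU while crossing processes
    procs = [
        ctx.Process(target=worker, args=(rank, host_tensor, results))
        for rank in range(num_workers)
    ]
    for p in procs:
        p.start()
    collected = dict(results.get() for _ in procs)
    for p in procs:
        p.join()
    return collected


if __name__ == "__main__":
    print(main())
```

The cost is an extra host-to-device copy in every worker, which may or may not be acceptable depending on tensor size. It does not replace real GPU memory sharing such as NvSCI.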
