I may or may not have information that helps. I encountered this problem in a completely different context: the Uni_PC sampler in ComfyUI image generation threw it. Here is the error, followed by my solution:

Traceback (most recent call last):

File "ComfyUI/execution.py", line 496, in execute

output_data, output_ui, has_subgraph, has_pending_tasks = await get_output_data(prompt_id, unique_id, obj, input_data_all, execution_block_cb=execution_block_cb, pre_execute_cb=pre_execute_cb, hidden_inputs=hidden_inputs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/execution.py", line 315, in get_output_data

return_values = await _async_map_node_over_list(prompt_id, unique_id, obj, input_data_all, obj.FUNCTION, allow_interrupt=True, execution_block_cb=execution_block_cb, pre_execute_cb=pre_execute_cb, hidden_inputs=hidden_inputs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/execution.py", line 289, in _async_map_node_over_list

await process_inputs(input_dict, i)

File "ComfyUI/execution.py", line 277, in process_inputs

result = f(**inputs)

^^^^^^^^^^^

File "ComfyUI/nodes.py", line 1521, in sample

return common_ksampler(model, seed, steps, cfg, sampler_name, scheduler, positive, negative, latent_image, denoise=denoise)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/nodes.py", line 1488, in common_ksampler

samples = comfy.sample.sample(model, noise, steps, cfg, sampler_name, scheduler, positive, negative, latent_image,

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/sample.py", line 45, in sample

samples = sampler.sample(noise, positive, negative, cfg=cfg, latent_image=latent_image, start_step=start_step, last_step=last_step, force_full_denoise=force_full_denoise, denoise_mask=noise_mask, sigmas=sigmas, callback=callback, disable_pbar=disable_pbar, seed=seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/samplers.py", line 1143, in sample

return sample(self.model, noise, positive, negative, cfg, self.device, sampler, sigmas, self.model_options, latent_image=latent_image, denoise_mask=denoise_mask, callback=callback, disable_pbar=disable_pbar, seed=seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/samplers.py", line 1033, in sample

return cfg_guider.sample(noise, latent_image, sampler, sigmas, denoise_mask, callback, disable_pbar, seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/samplers.py", line 1018, in sample

output = executor.execute(noise, latent_image, sampler, sigmas, denoise_mask, callback, disable_pbar, seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/patcher_extension.py", line 111, in execute

return self.original(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/samplers.py", line 986, in outer_sample

output = self.inner_sample(noise, latent_image, device, sampler, sigmas, denoise_mask, callback, disable_pbar, seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/samplers.py", line 969, in inner_sample

samples = executor.execute(self, sigmas, extra_args, callback, noise, latent_image, denoise_mask, disable_pbar)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/patcher_extension.py", line 111, in execute

return self.original(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/samplers.py", line 748, in sample

samples = self.sampler_function(model_k, noise, sigmas, extra_args=extra_args, callback=k_callback, disable=disable_pbar, **self.extra_options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/extra_samplers/uni_pc.py", line 868, in sample_unipc

x = uni_pc.sample(noise, timesteps=timesteps, skip_type="time_uniform", method="multistep", order=order, lower_order_final=True, callback=callback, disable_pbar=disable)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/extra_samplers/uni_pc.py", line 722, in sample

x, model_x = self.multistep_uni_pc_update(x, model_prev_list, t_prev_list, vec_t, init_order, use_corrector=True)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/extra_samplers/uni_pc.py", line 472, in multistep_uni_pc_update

return self.multistep_uni_pc_bh_update(x, model_prev_list, t_prev_list, t, order, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "ComfyUI/comfy/extra_samplers/uni_pc.py", line 653, in multistep_uni_pc_bh_update

rhos_c = torch.linalg.solve(R, b)

^^^^^^^^^^^^^^^^^^^^^^^^

RuntimeError: Error in dlopen: ComfyUI/comfy-env/lib/python3.11/site-packages/torch/lib/libtorch_cuda_linalg.so: undefined symbol: cusolverDnXsyevBatched_bufferSize, version libcusolver.so.11

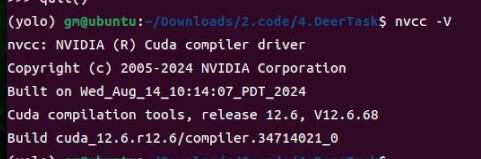

I wondered why my torch 2.7.0 with CUDA 12.9 would use a library that sounds like it comes from CUDA 11, so I searched my environment for other CUDA-related packages and found cupy-cuda11x. I removed it, intending to see which package depends on it and go from there, and the problem went away. To my surprise, it was not actually removed but updated from 12.3.0 to 13.0.0. So I think these are the possible explanations:
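For anyone who wants to repeat that search, here is a rough sketch of how I would list the CUDA-related packages in the active environment. The keyword list and the function names are my own guesses for illustration, not anything ComfyUI or PyTorch provides; it only uses the standard library's `importlib.metadata`.

```python
from importlib import metadata

# Substrings that hint at a CUDA-related package. This list is an assumption;
# extend it for your own environment.
CUDA_HINTS = ("cuda", "cupy", "nvidia", "cusolver", "cublas", "torch")


def is_cuda_related(name, hints=CUDA_HINTS):
    """Return True if the package name contains one of the hint substrings."""
    lowered = name.lower()
    return any(hint in lowered for hint in hints)


def cuda_related_packages():
    """Map name -> version for installed packages that look CUDA-related."""
    found = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name and is_cuda_related(name):
            found[name] = dist.version
    return dict(sorted(found.items()))


if __name__ == "__main__":
    for name, version in cuda_related_packages().items():
        print(f"{name}=={version}")
```

Run it with the Python interpreter of the environment that ComfyUI uses, otherwise it lists the packages of a different environment.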

→ cupy-cuda11x 12.3.0 makes PyTorch look for the wrong symbol, for reasons I cannot begin to understand, and updating to 13.0.0 fixes it.

→ OR removing the package resolved a situation where other packages shadowed the proper ones in my conda environment. My pip is configured correctly, though, and the PATH looks good.

→ OR maybe it was not a wrong link pointing outside the conda environment, and the package simply got updated without my seeing it in any log.
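To tell these explanations apart, it might help to see which libcusolver file the process actually has loaded. The following is only a sketch under the assumption that you are on Linux, where `/proc/self/maps` lists the files mapped into the current process; the function names are made up for this post.

```python
from pathlib import Path


def loaded_libraries(maps_text, needle):
    """Return sorted file paths from /proc/<pid>/maps text that contain needle."""
    paths = set()
    for line in maps_text.splitlines():
        # Fields: address, perms, offset, dev, inode, pathname (optional).
        parts = line.split(maxsplit=5)
        if len(parts) == 6 and needle in parts[5]:
            paths.add(parts[5])
    return sorted(paths)


def loaded_in_this_process(needle="cusolver"):
    """Check the current process; returns [] where /proc is not available."""
    maps = Path("/proc/self/maps")
    if not maps.exists():
        return []
    return loaded_libraries(maps.read_text(), needle)


if __name__ == "__main__":
    # Import torch (and anything else that pulls in CUDA libraries) before
    # this call, otherwise nothing CUDA-related is mapped yet.
    for path in loaded_in_this_process():
        print(path)
```

If the printed path points outside the conda environment, or into a different package than expected, that would support the shadowing explanation. An empty result only means that nothing matching was loaded at the time of the call.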

Hope you can take something away from this.