On the TX1, “reboot -f” fails to reboot the system, and the power supply must be disconnected to recover.

I also find that a “kworker” process is using 100% CPU.

It does look like there is an error with “reboot -f”…sometimes. I haven’t found what triggers/reproduces this.

Note: You can check “uptime” to see if the system truly rebooted, or if it just dumped logins.
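As a rough sketch of that check: record the kernel's boot ID before issuing the reboot and compare it after reconnecting. This assumes a Linux kernel that exposes /proc/sys/kernel/random/boot_id; the function names are made up for illustration.

```shell
#!/bin/sh
# Print the current boot ID; the kernel generates a new one on every boot.
# The optional argument (default: the real proc file) exists only so the
# function can be exercised against an ordinary file.
current_boot_id() {
    cat "${1:-/proc/sys/kernel/random/boot_id}"
}

# Succeed (exit 0) only if the boot ID differs from the one saved earlier.
rebooted_since() {
    saved_id="$1"
    [ "$(current_boot_id "$2")" != "$saved_id" ]
}
```

Save the value with before=$(current_boot_id) prior to the reboot attempt, then after logging back in run rebooted_since "$before" && echo "system really rebooted". An unchanged ID means only the logins were dropped.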

Initially I had “reboot -f” failing just like what you described, but for whatever reason after a normal “shutdown -r now” and normal “reboot” (no “-f”) “reboot -f” started working. It may be related to cold boot versus warm boot…I don’t know, I wasn’t able to make it show up again (the TK1 was unplugged for a couple of days before the initial test). I saw no dmesg, and once I got past this via normal reboots I couldn’t get it to occur again.

FYI, “shutdown -h now” or “shutdown -r now” does always work as expected even when “reboot -f” fails. Ordinary “reboot” (without “-f”) also always works. I would guess the first question is why you need “reboot -f”? Has something gone wrong on shutdown which makes you want to use this, or are you just experimenting? Knowing what is prompting the use of this might provide clues as to what was causing it. I do not see the 100% CPU use so I can’t reproduce this after its first occurrence.

You might try htop instead of top…the CPU usage chart will probably show 100% is for only a single core, not all cores. You can also go to a tree view in htop which would allow you to look for child/parent thread dependencies. Finding more information on that specific ksoftirq might help.

FYI, a driver can be split so that part of it is more “modular” and can migrate across cores (it’s an efficient way to design drivers…execute what is mandatory right away, and make the rest able to offload to a different core or to a later time on the same core). That separate/modular part of the driver is run via software interrupts and ksoftirqd, so if any core other than CPU0 is locked there should be no fatal interference with “reboot -f” (CPU0 handles both hardware IRQs and software IRQs; all other cores can handle software interrupts but not hardware interrupts).

If part of the init scripts depends on an orderly shutdown of something blocked by a locked software driver thread, then shutdown by normal methods might fail while waiting forever (or at least waiting for a long timeout). In theory “reboot -f” should skip init waiting on blocked software, but it won’t be of any use in a CPU0 lockup…that would be outside of init, in kernel space, possibly blocking hardware access.

Reworded: ksoftirqd shouldn’t be a problem for “reboot -f” unless it or a parent of it is blocking access to CPU0. The ksoftirqd itself may have nothing to do with a CPU0 lock, but a parent which started it might (it’s hard to say since I can’t seem to trigger the fault after the initial occurrence). If you can still reproduce this (I could at first, but not now) and find the root of the 100% CPU use via the tree view of htop it could help.

The htop command was not found on the TX1.

Also, is “kworker” a filesystem error?

sudo apt-get install htop

kworker is a normal product of drivers as a system becomes loaded down. A kworker locking up and denying a CPU core is not normal. Locking CPU core 0 is probably fatal.

thank you very much.

Any information you might find on kworker process which seems at fault (including any parent or child, and information on which core is stuck at 100%) would be helpful.
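If htop is not available, plain ps can give a similar picture. The following is only a sketch, assuming the procps version of ps (the one Ubuntu ships); the function name is hypothetical.

```shell
#!/bin/sh
# List the busiest tasks together with their parent PID and the core (PSR)
# each one last ran on. The argument is how many tasks to show (default 10);
# one extra line is kept for the column header.
top_cpu_tasks() {
    ps -eo pid,ppid,psr,pcpu,stat,comm --sort=-pcpu | head -n "$(( ${1:-10} + 1 ))"
}
```

Running top_cpu_tasks 5 while the problem is occurring should put the stuck kworker near the top, with the PSR column showing which core it is on. As root, reading /proc/<pid>/stack for that PID may also show what the kernel thread is executing, if the kernel was built with that support.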

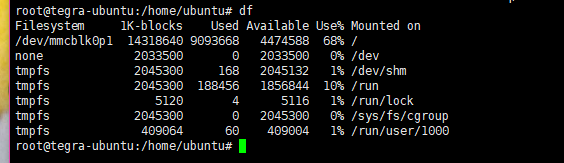

Step 1: Once the “kworker” process shows high CPU usage, ssh still works and other commands run normally.

However, when I send the command "reboot -f", that ssh session blocks.

Step 2: From a new ssh connection, "ps -aux | grep reboot" shows that the "reboot -f" command is still there, blocked.

Step 3: The TX1 must be power cycled.
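For step 2, a slightly more detailed view of the stuck command might help. This is a sketch assuming procps ps and that the blocked process shows up under the command name "reboot"; the function name is invented here.

```shell
#!/bin/sh
# Show state and kernel wait channel for every process with the given
# command name (default "reboot").
# STAT "D" means uninterruptible sleep (usually waiting inside the kernel);
# "R" means running or runnable. WCHAN names the kernel function it sleeps in.
show_proc_state() {
    ps -o pid,stat,wchan:32,cmd -C "${1:-reboot}"
}
```

From the second ssh connection, show_proc_state prints one line per matching process. A "D" state with a WCHAN value would suggest the reboot call is waiting on something in the kernel rather than spinning.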

I did reproduce this for a moment early on, but then not after. It makes it hard to debug since I can’t make this occur during testing (I know the issue is there since I saw it once).

Since you can make this occur again there are a couple of tools you can use to get more information. One is strace, the other is forcing a kernel OOPS with sysrq.

strace:

strace shows system calls reaching the kernel from a user space process. This is a lot of output in a very short time, but could be very useful. Normally one would start a program under strace to get logs from the start, e.g., something like this:

sudo -s

strace -oTraceLog.txt reboot -f

One can also attach strace to a running process ID. This lets you hold off logging until the problem is hit, which avoids very large log files (you can also run strace without the “-oLogFile.txt” option to just see output on the console and not fill up the file system). Logging right from the start probably produces too much output if it takes time to reach the problem. If instead you attach strace to a PID after you know the issue has hit, and then kill the strace after a few seconds, you’ll probably get a repeating pattern in the log. So perhaps you could look up the PID from your second ssh connection, and get a log something like this (substitute the actual PID for “<pid>”):

sudo -s

strace -p <pid>

# ...wait a few seconds...

# "control-c" to terminate the trace...

sync

# ...whatever you need for reboot...

This log will be most interesting if instead of constant polling we see the log ends with a wait. Even if it is polling we should see what it is skipping over, but the log will be much bigger.
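To spot a repeating pattern quickly, the log can be reduced to a count per system call. The sketch below assumes the log was written by attaching to a single PID with “-o” and without “-f” or timestamp options, so each line starts with the call name; both function names are made up.

```shell
#!/bin/sh
# Attach strace to a running PID for a few seconds, then let timeout stop it.
# Arguments: PID, seconds (default 5), log file (default TraceLog.txt).
trace_for() {
    timeout "${2:-5}" strace -o "${3:-TraceLog.txt}" -p "$1"
}

# Count how often each system call appears in a log, most frequent first.
# Lines look like "name(args) = result"; keep the text before the first "(".
summarize_trace() {
    awk '/^[a-z_0-9]+\(/ {
             name = $0
             sub(/\(.*/, "", name)
             count[name]++
         }
         END { for (n in count) print count[n], n }' "$1" | sort -rn
}
```

As root, trace_for <pid> followed by summarize_trace TraceLog.txt gives a short list. A handful of calls with very high counts points to polling, while a nearly empty log suggests the process is sitting in a single wait.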

Magic SysRq:

“Magic sysrq” keys are quite interesting for situations like this. Basically there is a part of the kernel still running even when much of the system is dead, and if this part sees certain events, then it’ll do what it’s programmed to do. One way of producing the events is with keystrokes, the other way is to echo the equivalent to “/proc/sysrq-trigger” as user root.

From a directly attached keyboard you’ll find ALT-SYSRQ-<key> does the trick. The sysrq key is typically the same as the prtscn key, in the upper right area of the keyboard. If you watch “dmesg --follow” somewhere, e.g., on ssh or serial console, and then at the directly attached keyboard type alt-sysrq-c, then you will see a kernel crash dump.

The alternative is from a serial console or from an ssh connection:

sudo sh -c 'echo c > /proc/sysrq-trigger'

You can then copy the dmesg log…or better yet, if you were using serial console, just log from the console. You’ll be able to show what the CPUs were up to during the reboot failure.

The “c” key is just one possibility. A backtrace on each active core will be shown if you use “l”. Either a local keyboard with ALT-SYSRQ-l, or:

sudo sh -c 'echo l > /proc/sysrq-trigger'

(this will be very similar to the crash dump of “c”)

Not all sysrq combinations apply to all architectures. Even if a sysrq applies to an architecture it might still require a different kernel config to use it. By default sysrq is enabled on Jetsons, and pretty much every PC version has at least some subset enabled by default (you can change configurations to allow or deny sysrq). You can see a list of sysrq keys here:

https://en.wikipedia.org/wiki/Magic_SysRq_key
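To see which sysrq functions a given system permits from the keyboard, the value in /proc/sys/kernel/sysrq can be decoded. This is a minimal sketch based on the kernel's sysrq documentation; the function name is hypothetical.

```shell
#!/bin/sh
# Succeed if the given sysrq setting permits the function group "bit".
# 0 disables everything, 1 enables everything, and larger values are a
# bitmask, e.g. 8 = debugging dumps (used by "l" and "c"), 16 = sync,
# 32 = remount read-only, 128 = reboot/poweroff.
sysrq_allows() {
    value="$1"
    bit="$2"
    [ "$value" -eq 1 ] && return 0
    [ $(( value & bit )) -ne 0 ]
}
```

For example, sysrq_allows "$(cat /proc/sys/kernel/sysrq)" 8 && echo "dumps allowed" checks the running system. This mask only restricts keyboard invocation; root writing to /proc/sysrq-trigger is always allowed.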

If you can, please attach any log showing the “c” crash dump or the backtrace, along with whatever strace shows while the CPU seems locked. I can’t get my Jetson to do this a second time, so since you have a repeatable condition you might be able to get some good information.

It isn’t definitive, but the htop screenshot tends to imply compiz is locking one core. There is a known crash of compiz on logout where a kernel error message is posted, but normally this has no effect. I’m thinking that perhaps it is possible that the reboot command may sometimes be locked if compiz crashes on the first core at shutdown…but that is just speculation about locking on to the core and not releasing it.

kworker is essentially the thread to schedule software-only parts of drivers under load. Seeing the second png with this at 100% does not preclude compiz from being what is executing at the moment despite showing as kworker (this could be the deferred software portion of the compiz driver as scheduled under kworker). If you had a screenshot of this occurring under htop while htop is in tree view (see the menu at the bottom of htop…“F5” key toggles tree versus sorted view), then we might be able to definitively link kworker to compiz…or of course we might be able to rule it out. If you get a chance to run htop again while this happens try to look at the tree view.

It isn’t a “smoking gun”, but it certainly looks like the logout crash bug for compiz might at times cause the 100% kworker thread that stops “reboot -f” from working. If so, then you’ve demonstrated the first case of the compiz logout crash bug not being harmless.

If the problem (bug) happens, how can I restart the device remotely (over ssh)?

I have 40 TX1 units in the data center, and otherwise I have to drive there to power cycle them.

I suspect that if you still have ssh access you can use “sudo shutdown -r now” to reboot. If you don’t have a working ssh, then you are probably out of luck. Even when I had “reboot -f” fail, if I had ssh or console access I found “shutdown -r now” still worked.

If that doesn’t work then perhaps try:

sudo -s

echo s > /proc/sysrq-trigger

echo u > /proc/sysrq-trigger

echo b > /proc/sysrq-trigger
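The same sequence (sync, remount read-only, reboot) could be wrapped in a small function with a pause between steps so the sync has time to finish. This is only a sketch; the function name, the two-second default and the extra parameters are assumptions for illustration.

```shell
#!/bin/sh
# Emergency reboot through magic sysrq: s = sync, u = remount read-only,
# b = reboot immediately. Must run as root on the real trigger file.
# "trigger" and "delay" are parameters only so the sequence can be
# dry-run against an ordinary file.
sysrq_reboot() {
    trigger="${1:-/proc/sysrq-trigger}"
    delay="${2:-2}"
    for key in s u b; do
        printf '%s\n' "$key" > "$trigger" || return 1
        sleep "$delay"
    done
}
```

Calling sysrq_reboot with no arguments from a root shell performs the real thing. Expect the ssh session to drop without a clean logout, since “b” reboots at once without stopping any services.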