Seems like there was an auto update today that replaced my custom kernel…

So I did a “sync_sources” and rebuilt the kernel.

Then I tried flashing the kernel with “sudo ./flash.sh -k LNX jetson-agx-xavier-devkit mmcblk0p1”

That failed with an error… so then I tried the bootloader with “sudo ./flash.sh -k EBT jetson-agx-xavier-devkit mmcblk0p1”

That produced the same error… see below…

Any help would REALLY be appreciated, as right now my Xavier is dead in the water without the custom kernel and I REALLY don’t want to re-flash the entire thing from scratch again…

[ 10.0993 ] tegrarcm_v2 --boot recovery

[ 10.1019 ] Applet version 01.00.0000

[ 10.4696 ]

[ 11.4738 ] tegrarcm_v2 --isapplet

[ 12.2036 ]

[ 12.2044 ] tegrarcm_v2 --ismb2

[ 12.5494 ]

[ 12.5525 ] tegradevflash_v2 --iscpubl

[ 12.5552 ] Bootloader version 01.00.0000

[ 12.7389 ] Bootloader version 01.00.0000

[ 12.7402 ]

[ 12.7403 ] Writing partition

[ 12.7411 ] tegradevflash_v2 --write EBT 1_nvtboot_cpu_t194_sigheader.bin.encrypt

[ 12.7416 ] Bootloader version 01.00.0000

[ 13.2077 ] Writing partition EBT with 1_nvtboot_cpu_t194_sigheader.bin.encrypt

[ 13.2079 ] 000000000d0d000d: o open partition %s.

[ 13.2094 ]

[ 13.2094 ]

Error: Return value 13

Command tegradevflash_v2 --write EBT 1_nvtboot_cpu_t194_sigheader.bin.encrypt

Failed to flash/read t186ref.

hello koosdupreez,

That’s incorrect. To re-flash the kernel image you should update the kernel partition.

You may refer to the flash configuration files to check the actual partition names.

for example,

<partition name="kernel" type="data" oem_sign="true">

<allocation_policy> sequential </allocation_policy>

<filesystem_type> basic </filesystem_type>

<size> LNXSIZE </size>

<file_system_attribute> 0 </file_system_attribute>

<allocation_attribute> 8 </allocation_attribute>

<percent_reserved> 0 </percent_reserved>

<filename> LNXFILE </filename>
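To see which partition names are actually defined, the name attributes can be pulled out of the layout file with standard text tools. This is only a minimal sketch: the helper name list_partition_names is made up for illustration, it does plain text matching rather than real XML parsing, and the path in the usage note is my assumption for the AGX Xavier layout (check your board's .conf file for the file it really uses).

```shell
#!/bin/bash
# list_partition_names FILE
# Prints the name="..." attribute of every <partition ...> element in FILE,
# one per line. Hypothetical helper; text matching only, not an XML parser.
list_partition_names() {
    grep -o '<partition[^>]*[[:space:]]name="[^"]*"' "$1" \
        | sed 's/.*[[:space:]]name="\([^"]*\)".*/\1/'
}
```

Possible usage from the Linux_for_Tegra directory (path assumed, not taken from this thread): list_partition_names bootloader/t186ref/cfg/flash_t194_sdmmc.xml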

Hi Jerry - I don’t follow.

the flash.sh file says:

# ./flash.sh -k LNX <target_board> mmcblk1p1 - update <target_board> kernel

I’m not booting from an SD card, so I changed it to mmcblk0p1. I assume you mean I also have to change LNX to the partition ID…

I’ll try that.

BTW - I have previously just replaced the kernel Image file directly in /boot, but I saw there are now .sig signature files, so I assumed I had to go via the flash.sh route to get the new kernel on there.

If I can simply copy the Image and .sig files over, would that work too?

thanks for your guidance.

OK - I tried sudo ./flash.sh -k kernel jetson-agx-xavier-devkit mmcblk0p1

It says success, but the kernel was never updated.

[ 14.0424 ] Bootloader version 01.00.0000

[ 14.2504 ] Writing partition kernel with 1_boot_sigheader.img.encrypt

[ 14.2519 ] [................................................] 100%

[ 16.0981 ]

[ 16.0984 ] Coldbooting the device

[ 16.1013 ] tegrarcm_v2 --ismb2

[ 16.4458 ]

[ 16.4490 ] tegradevflash_v2 --reboot coldboot

[ 16.4515 ] Bootloader version 01.00.0000

[ 16.5904 ]

*** The [kernel] has been updated successfully. ***

But it’s still the one from 2/19?

-rw-r--r-- 1 root root 34338824 Feb 19 08:50 Image

-rw-r--r-- 1 root root 4096 Feb 19 12:34 Image.sig

hello koosdupreez,

There are two ways to update the kernel image:

(1) You may overwrite /boot/Image on your target; it’s unnecessary to sign and encrypt this binary. Note that CBoot controls the kernel boot sequence using extlinux.conf, and it will load the kernel binary file named in the LINUX entry.

(2) If there is no LINUX entry in the extlinux.conf configuration file, the kernel binary will be loaded from the kernel partition. That image must be signed and encrypted, which the flash script does for you.
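Which of the two cases applies can be checked by looking for a LINUX entry in extlinux.conf. A small sketch, assuming the usual location /boot/extlinux/extlinux.conf on the target; the helper name extlinux_linux_entries is made up for illustration.

```shell
#!/bin/bash
# extlinux_linux_entries FILE
# Prints the kernel path from every uncommented LINUX line in FILE.
# Prints nothing when there is no LINUX entry, which per the description
# above is the case where the kernel partition is used instead.
extlinux_linux_entries() {
    awk 'toupper($1) == "LINUX" { print $2 }' "$1"
}
```

Possible usage on the Jetson: extlinux_linux_entries /boot/extlinux/extlinux.conf. If this prints /boot/Image, then flashing the kernel partition would not change which kernel boots.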

Hi again Jerry

Sorry - that doesn’t work…

When I rebuild the kernel and just replace the Image, I get all kinds of errors on the boot screen, then it just goes blank… and no graphical user interface… seems like modules won’t load…

When I revert back to the original signed Image everything works fine…

Should I update the modules or DTBs as well?

Here is one of the errors… the other was could not load nvpmmodel

Keep in mind kernel modules failing to load is not a result of flashing. This is a result of not configuring the new kernel.

When you run the command “uname -r” it produces an answer based on the kernel source version and the CONFIG_LOCALVERSION which was set at the time of kernel build. Jetsons have a default:

CONFIG_LOCALVERSION="-tegra"

In a 4.9.140 kernel this would result in a uname -r of “4.9.140-tegra”. If you failed to set CONFIG_LOCALVERSION, then it would instead produce “4.9.140”.

The reason this matters is that a kernel Image searches for modules at “/lib/modules/$(uname -r)/kernel/”. Should “uname -r” change, then your kernel will no longer find its modules unless you’ve also installed all of the modules at the new “/lib/modules/$(uname -r)/kernel/”. The flash process has no knowledge of your kernel configuration.
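To make the naming rule above concrete, here is a rough sketch that joins a base version with CONFIG_LOCALVERSION and checks whether the matching module directory exists. Both function names are made up for illustration, and the sketch is simplified: it ignores any other suffix the kernel build system may append to the release string.

```shell
#!/bin/bash
# kernel_release VERSION LOCALVERSION
# Joins the base kernel version and CONFIG_LOCALVERSION the way they show
# up in "uname -r" (simplified; other possible suffixes are ignored).
kernel_release() {
    printf '%s%s\n' "$1" "$2"
}

# check_modules_dir ROOT RELEASE
# Succeeds if ROOT/lib/modules/RELEASE/kernel exists, i.e. a kernel that
# reports RELEASE would find its modules under ROOT.
# ROOT is "/" on a running Jetson, or the rootfs directory on the host.
check_modules_dir() {
    local dir="${1%/}/lib/modules/$2/kernel"
    if [ -d "$dir" ]; then
        echo "ok: $dir"
    else
        echo "missing: $dir"
        return 1
    fi
}
```

Possible usage on the Jetson: check_modules_dir / "$(uname -r)". On the host, the first argument could be the Linux_for_Tegra/rootfs directory and the second the release you expect the new kernel to report.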


That was it!

I had set the local version and appended a string to identify my custom kernel…

When I removed that and made it simply “-tegra”, it works great!!

One last issue is, it seems there might be a bug in the makefile?

I get this gcc error

gcc: error: unrecognized command line option ‘-mlittle-endian’; did you mean ‘-fconvert=little-endian’?

What is your exact command line? If you have some cross compile flags in it when not used, or vice versa, then this could do that. Basically mistaken architecture…which is what native versus cross compile is about.

I run these two make commands

make ARCH=arm64 O=$TEGRA_KERNEL_OUT tegra_defconfig

make ARCH=arm64 O=$TEGRA_KERNEL_OUT -j12

where $TEGRA_KERNEL_OUT is env var pointing to my output path.

and tegra_defconfig is the standard config file that shipped with JetPack 4.5 (Linux_for_Tegra/sources/kernel/kernel-4.9/arch/arm64/configs/tegra_defconfig); I did not modify it.

This would fail regardless of cross compile or native compile. For a native compile you need to leave out the “ARCH=arm64”. For a cross compile you need to additionally specify the toolchain (the “CROSS_COMPILE=/some/tool/chain/location/bin/...”).
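The native versus cross distinction described here can be written down as a small helper that picks the extra make arguments from the build host's architecture. This is a sketch only; the function name kernel_make_args is made up, and it follows the advice in this reply (no ARCH on a native build, ARCH plus CROSS_COMPILE on a cross build).

```shell
#!/bin/bash
# kernel_make_args MACHINE [TOOLCHAIN_PREFIX]
# MACHINE is the output of "uname -m" on the build host.
# On aarch64 (native build on the Jetson) nothing is printed.
# Otherwise ARCH and CROSS_COMPILE are printed; TOOLCHAIN_PREFIX is required.
kernel_make_args() {
    local machine="$1" prefix="${2:-}"
    if [ "$machine" = "aarch64" ]; then
        return 0
    fi
    if [ -z "$prefix" ]; then
        echo "cross compile needs a toolchain prefix" >&2
        return 1
    fi
    printf 'ARCH=arm64 CROSS_COMPILE=%s\n' "$prefix"
}
```

Possible usage, assuming the prefix contains no spaces: make $(kernel_make_args "$(uname -m)" "$CROSS_COMPILE") O=$TEGRA_KERNEL_OUT tegra_defconfig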

CROSS_COMPILE is already exported and points to my toolchain earlier in my build script.

export CROSS_COMPILE="${1}/bin/aarch64-linux-gnu-" where $1 is passed in as a param to my script of where toolchain is installed.

Then this should work.

However, if there is a mistake on big/little endian, then something is really wrong with the compile options or arguments. I don’t know what the script you use does, but it seems like there is some sort of incorrect big/little endian setup (which I would normally attribute to not having cross compile arguments correct).

FYI, people can set up arm64 for either big or little endian (in hardware) when designing their CPU, but the default (and the one NVIDIA uses) is little endian. That error tends to imply that either the build does not know which endian, or else it is using big endian and the hardware wants little endian. On the Jetson, see:

lscpu

On the kernel module itself, assuming name is “module.ko”, then what do you see from:

readelf -h module.ko
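If there are many modules to check, the two interesting fields of the readelf header can be reduced to one line each. A minimal sketch; the helper name elf_arch_summary is made up, and it only looks at the “Machine:” and “Data:” lines of “readelf -h” output.

```shell
#!/bin/bash
# elf_arch_summary
# Reads "readelf -h" output on stdin and prints "<Machine> / <endianness>".
elf_arch_summary() {
    awk -F': *' '
        /^ *Machine:/ { machine = $2; sub(/[ \t\r]+$/, "", machine) }
        /^ *Data:/    { data = ($2 ~ /little endian/) ? "little endian" : "big endian" }
        END           { print machine " / " data }
    '
}
```

Possible usage: readelf -h module.ko | elf_arch_summary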

My script doesn’t do much beyond exporting CROSS_COMPILE and then calling

make ARCH=arm64 O=$TEGRA_KERNEL_OUT tegra_defconfig

make ARCH=arm64 O=$TEGRA_KERNEL_OUT -j12

and to install the modules:

make ARCH=arm64 O=$TEGRA_KERNEL_OUT modules_install INSTALL_MOD_PATH=$INSTALL_MOD_PATH

this is readelf output from one of my modules.

ELF Header:

Magic: 7f 45 4c 46 02 01 01 00 00 00 00 00 00 00 00 00

Class: ELF64

Data: 2's complement, little endian

Version: 1 (current)

OS/ABI: UNIX - System V

ABI Version: 0

Type: REL (Relocatable file)

Machine: AArch64

Version: 0x1

Entry point address: 0x0

Start of program headers: 0 (bytes into file)

Start of section headers: 399856 (bytes into file)

Flags: 0x0

Size of this header: 64 (bytes)

Size of program headers: 0 (bytes)

And in case you were interested, this is the entire script for building the kernel and modules and preparing the kernel for flashing via flash.sh (I do a prepare_binaries prior to flashing)

#!/bin/bash

export TOP=/data/software/NVidia/JetPack_4.5_Linux_JETSON_AGX_XAVIER

export TEGRA_KERNEL_OUT=$TOP/Linux_for_Tegra/sources/kernel/kernel-4.9-out

export CROSS_COMPILE=/data/software/NVidia/l4t-gcc/gcc-linaro-7.3.1-2018.05-x86_64_aarch64-linux-gnu/bin/aarch64-linux-gnu-

export LOCALVERSION=-tegra

export INSTALL_MOD_PATH=$TOP/Linux_for_Tegra/rootfs

echo -e "\nBacking Up Old Kernel Image and DTB"

tar -zcvf $TOP/backups/Backup-Kernel-Image.tar.gz $TOP/Linux_for_Tegra/kernel/Image* > /dev/null

tar -zcvf $TOP/backups/Backup-Kernel-dtb.tar.gz $TOP/Linux_for_Tegra/kernel/dtb/* > /dev/null

echo -e "\nBacking Up Old Modules"

tar -zcvf $TOP/backups/Backup-Kernel-Modules.tar.gz $TOP/Linux_for_Tegra/rootfs/lib/modules/* > /dev/null

echo -e "\nBacking Up Old kernel_supplements.tbz2"

cp $TOP/Linux_for_Tegra/kernel/kernel_supplements.tbz2 $TOP/backups/

echo -e "\nBuilding Kernel"

make ARCH=arm64 O=$TEGRA_KERNEL_OUT tegra_defconfig

make ARCH=arm64 O=$TEGRA_KERNEL_OUT -j12

echo -e "\nReplacing Kernel Image and DTB"

cp -r $TEGRA_KERNEL_OUT/arch/arm64/boot/Image* $TOP/Linux_for_Tegra/kernel

cp -r $TEGRA_KERNEL_OUT/arch/arm64/boot/dts/* $TOP/Linux_for_Tegra/kernel/dtb/

echo -e "\nInstalling Kernel Modules"

sudo make ARCH=arm64 O=$TEGRA_KERNEL_OUT modules_install INSTALL_MOD_PATH=$INSTALL_MOD_PATH

echo -e "Creating kernel_supplements.tbz2"

cd $INSTALL_MOD_PATH

sudo tar --owner root --group root -cjf kernel_supplements.tbz2 lib/modules

sudo mv kernel_supplements.tbz2 $TOP/Linux_for_Tegra/kernel/

sudo chown nvidia:nvidia $TOP/Linux_for_Tegra/kernel/kernel_supplements.tbz2

sudo chmod 664 $TOP/Linux_for_Tegra/kernel/kernel_supplements.tbz2
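One thing that may be worth ruling out, and this is an assumption on my part rather than anything confirmed in this thread: the reported error is prefixed with plain “gcc:” rather than “aarch64-linux-gnu-gcc:”, which may mean the host compiler was invoked somewhere. With the default sudo configuration on Ubuntu the environment is reset, so exported variables such as CROSS_COMPILE are typically not visible to the “sudo make ... modules_install” step. Passing the variables on the make command line avoids depending on the environment. The helper name make_vars_for_sudo below is made up for illustration.

```shell
#!/bin/bash
# make_vars_for_sudo
# Prints the build variables as NAME=value words for the make command line,
# where they are passed as arguments instead of being inherited from an
# environment that sudo may have reset.
# Reads CROSS_COMPILE, TEGRA_KERNEL_OUT and INSTALL_MOD_PATH from the shell.
make_vars_for_sudo() {
    printf 'ARCH=arm64 CROSS_COMPILE=%s O=%s INSTALL_MOD_PATH=%s\n' \
        "$CROSS_COMPILE" "$TEGRA_KERNEL_OUT" "$INSTALL_MOD_PATH"
}
```

Possible usage, assuming none of the paths contain spaces: sudo make $(make_vars_for_sudo) modules_install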

The script does seem basically correct. The readelf output shows this is the correct architecture and endianness. So I am at a bit of a loss as to why you see the “unrecognized command line option ‘-mlittle-endian’” error.

When something this bizarre occurs, my first thought is that something in the configuration is triggering a feature not normally built; if that feature needed a bug fix for the architecture, the problem would show up now, but not for most people, who never use that feature. My second thought is that the compiler release may have changed some of its options.

As for the second possibility, I didn’t see explicit setting or export of the exact CROSS_COMPILE path (though you did specify this was done and set to “${1}/bin/aarch64-linux-gnu-”), which would specify the cross compiler. Let’s assume that the “${1}” is correctly set up…what do you see from:

${1}/bin/aarch64-linux-gnu-gcc --version

I am curious if that version might have some changes not accounted for. Also, where did you get the cross tools? Was this from the ones provided by NVIDIA, or via apt-get and part of Ubuntu, so on?
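Alongside the version, it can help to confirm which target the compiler behind the prefix was built for. A minimal sketch using gcc's -dumpmachine option; the helper name toolchain_target is made up for illustration.

```shell
#!/bin/bash
# toolchain_target PREFIX
# Prints the target triplet reported by "PREFIXgcc -dumpmachine",
# or fails if that compiler is not an executable file.
toolchain_target() {
    local gcc="${1}gcc"
    if [ ! -x "$gcc" ]; then
        echo "not executable: $gcc" >&2
        return 1
    fi
    "$gcc" -dumpmachine
}
```

Possible usage: toolchain_target "$CROSS_COMPILE". For a working arm64 cross toolchain I would expect a triplet starting with aarch64; anything starting with x86_64 would mean the prefix points at a host compiler.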

aarch64-linux-gnu-gcc (Linaro GCC 7.3-2018.05) 7.3.1 20180425 [linaro-7.3-2018.05 revision d29120a424ecfbc167ef90065c0eeb7f91977701]

Copyright (C) 2017 Free Software Foundation, Inc.

This is free software; see the source for copying conditions. There is NO

warranty; not even for MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE.

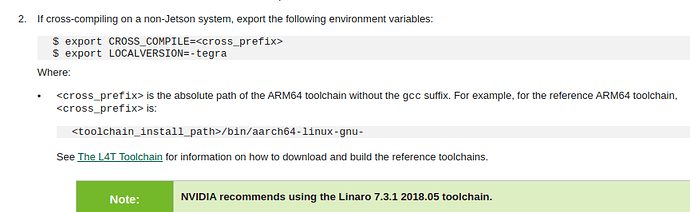

oh - and I got it from following this in the nvidia documentation:

https://docs.nvidia.com/jetson/l4t/index.html#page/Tegra%20Linux%20Driver%20Package%20Development%20Guide/kernel_custom.html

This in turn points to toolchain download at:

http://releases.linaro.org/components/toolchain/binaries/7.3-2018.05/aarch64-linux-gnu/gcc-linaro-7.3.1-2018.05-x86_64_aarch64-linux-gnu.tar.xz

So the toolchain itself is a known good chain. Although the script could still have some odd quirk we don’t know about, I’m thinking now that there might be some configuration detail which differs from the default, and perhaps this issue only shows up with that config.

It would be hard to say for sure without sitting down at the computer, running manual builds, adding config changes one at a time, and so on. I will say that I think your system is capable of a correct build if the environment and config are valid. I just don’t know what to look at next without a full log of a kernel build, and not just the failure line.

I will suggest building with the target “Image”. If it fails and you’ve used multiple CPU cores (like “-j12”), then running the build several more times will complete every item that can complete, and a final attempt will then produce a shorter build log with possibly useful information… but we’d want the full log and not just the one message.
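Capturing the full log while still watching the build can be done with tee. A minimal bash sketch; the helper name run_logged and the log file names in the usage note are made up for illustration.

```shell
#!/bin/bash
# run_logged LOGFILE COMMAND [ARGS...]
# Runs COMMAND, shows its stdout and stderr on the terminal, and also saves
# both to LOGFILE. Returns COMMAND's exit status rather than tee's.
run_logged() {
    local log="$1"
    shift
    "$@" 2>&1 | tee "$log"
    return "${PIPESTATUS[0]}"
}
```

Possible usage: first run_logged build-parallel.log make ARCH=arm64 O=$TEGRA_KERNEL_OUT -j12 Image, repeated until nothing more completes, then run_logged build-final.log make ARCH=arm64 O=$TEGRA_KERNEL_OUT Image for the shorter single-job log. Both logs would be worth posting.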