Dear NVidia,

We are 1C Games Studios, the developers of the IL-2 Sturmovik aviation simulator. We are currently working on a new iteration of our graphics engine for our next project, and one of the major changes in it is the migration to the DirectX 12 API. We have run into several issues along the way, and one of them we cannot resolve without your help because it appears to be hardware-dependent. We would therefore be grateful for your assistance or advice.

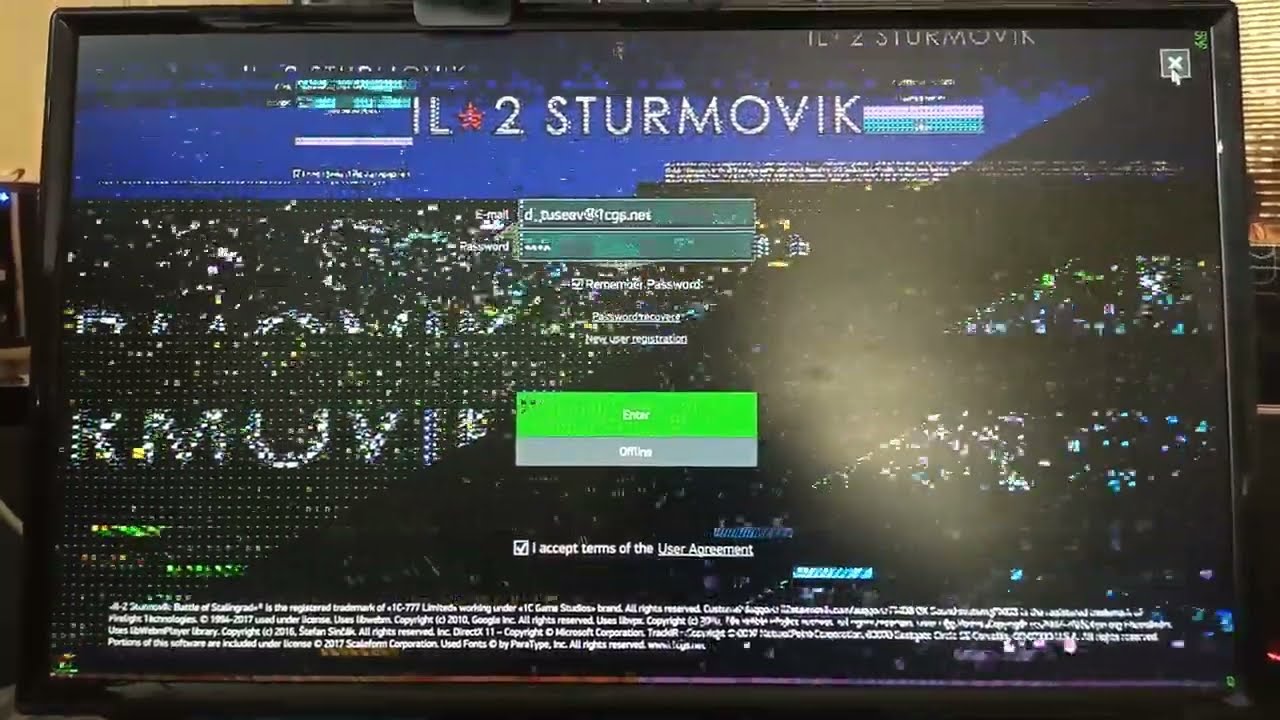

The issue is that if video memory is heavily used (for instance, by a web browser) at the moment the game starts, we get major graphical artefacts in the game (see the video at the link below).

Observations and notes:

- We were unable to reproduce this issue on a 2080 12GB video card.

- A 1080 8GB video card reproduces the issue if video memory is almost full before the game starts.

- A 1060 3GB video card reproduces the issue if more than 50% of video memory is in use before the game starts.

- We were unable to reproduce this issue on ATI video cards (both older and newer models).

- Regarding discrete (NUMA) NVidia adapters: we allocate our resources in several DEFAULT heaps until physical video memory is consumed (up to DXGI_QUERY_VIDEO_MEMORY_INFO::Budget). If we hit the VRAM limit, we allocate further heaps in shared L0 memory. However, this fallback should not be related to the issue described above, because we are running on low settings whose memory requirements fit within the on-board VRAM.

- We mostly tested this in windowed mode, 1920x1080.

- After exiting the game, we see that the browser has been pushed out of video memory anyway.

- The DirectX debug layer reports no errors when the artefacts appear; as far as it is concerned, everything is fine.

Please help us solve this issue. At the moment we are out of ideas about what to change to avoid these artefacts on NVidia video cards.

With regards,

Daniel Tuseyev

IL-2 Producer