My Python script for sending the record request is as follows:

from kafka import KafkaProducer
from kafka.errors import KafkaError
import json
import datetime
import time
import traceback

producer = KafkaProducer(bootstrap_servers='*********')
topic = "nx"
count = 1

while count < 3:
    # Request a clip that ends now (UTC) and starts 10 * count seconds earlier.
    d1 = datetime.datetime.utcnow()
    d2 = d1 - datetime.timedelta(seconds=10 * count)
    # Millisecond-precision timestamps, e.g. 2022-09-14T03:11:05.961Z
    d1string = d1.isoformat(timespec="milliseconds") + "Z"
    d2string = d2.isoformat(timespec="milliseconds") + "Z"
    d = {"command": "start-recording", "start": d2string, "end": d1string, "sensor": {"id": str(count)}}
    print(d)
    data = json.dumps(d)
    future = producer.send(topic, key='count_num'.encode('utf-8'), value=data.encode('utf-8'), partition=0)
    try:
        # Block until the broker acknowledges the message or the timeout expires.
        future.get(timeout=10)
    except KafkaError:
        traceback.print_exc()
    count += 1
    time.sleep(10)

We then receive errors like the following:

Our deepstream-test5-app config is:

[application]

enable-perf-measurement=1

perf-measurement-interval-sec=5

#gie-kitti-output-dir=streamscl

[tiled-display]

enable=0

rows=2

columns=2

width=1280

height=720

gpu-id=0

#(0): nvbuf-mem-default - Default memory allocated, specific to particular platform

#(1): nvbuf-mem-cuda-pinned - Allocate Pinned/Host cuda memory, applicable for Tesla

#(2): nvbuf-mem-cuda-device - Allocate Device cuda memory, applicable for Tesla

#(3): nvbuf-mem-cuda-unified - Allocate Unified cuda memory, applicable for Tesla

#(4): nvbuf-mem-surface-array - Allocate Surface Array memory, applicable for Jetson

nvbuf-memory-type=0

[source0]

enable=1

#Type - 1=CameraV4L2 2=URI 3=MultiURI

type=4

uri=rtsp://****

num-sources=1

gpu-id=0

nvbuf-memory-type=0

drop-frame-interval=0

smart-record=1

# 0 = mp4, 1 = mkv

smart-rec-container=0

smart-rec-file-prefix=a

smart-rec-dir-path=../../../../../sources/apps/sample_apps/deepstream-test-sutpc/configs

# smart record cache size in seconds

smart-rec-cache=30

smart-rec-duration=10

[source1]

enable=1

#Type - 1=CameraV4L2 2=URI 3=MultiURI

type=4

uri=rtsp://****

num-sources=1

gpu-id=0

nvbuf-memory-type=0

drop-frame-interval=0

smart-record=1

# 0 = mp4, 1 = mkv

smart-rec-container=0

smart-rec-file-prefix=a

smart-rec-dir-path=../../../../../sources/apps/sample_apps/deepstream-test-sutpc/configs

# smart record cache size in seconds

smart-rec-cache=30

smart-rec-duration=10

[source2]

enable=1

#Type - 1=CameraV4L2 2=URI 3=MultiURI

type=4

uri=rtsp://****

num-sources=1

gpu-id=0

nvbuf-memory-type=0

drop-frame-interval=0

smart-record=1

# 0 = mp4, 1 = mkv

smart-rec-container=0

smart-rec-file-prefix=a

smart-rec-dir-path=../../../../../apps/sample_apps/deepstream-test-sutpc/configs

# smart record cache size in seconds

smart-rec-cache=30

smart-rec-duration=10

[source3]

enable=1

#Type - 1=CameraV4L2 2=URI 3=MultiURI

type=4

uri=rtsp://****

num-sources=1

gpu-id=0

nvbuf-memory-type=0

drop-frame-interval=0

smart-record=1

# 0 = mp4, 1 = mkv

smart-rec-container=0

smart-rec-file-prefix=a

smart-rec-dir-path=../../../../../sources/apps/sample_apps/deepstream-test-sutpc/configs

# smart record cache size in seconds

smart-rec-cache=30

smart-rec-duration=10

[sink0]

enable=0

#Type - 1=FakeSink 2=EglSink 3=File

type=1

sync=1

source-id=0

gpu-id=0

enc-type = 1

nvbuf-memory-type=0

[sink1]

enable=0

#Type - 1=FakeSink 2=EglSink 3=File 4=UDPSink 5=nvdrmvideosink 6=MsgConvBroker

type=6

msg-conv-config=dstest5_msgconv_sample_config.txt

#(0): PAYLOAD_DEEPSTREAM - Deepstream schema payload

#(1): PAYLOAD_DEEPSTREAM_MINIMAL - Deepstream schema payload minimal

#(256): PAYLOAD_RESERVED - Reserved type

#(257): PAYLOAD_CUSTOM - Custom schema payload

msg-conv-payload-type=0

msg-broker-proto-lib=/opt/nvidia/deepstream/deepstream/lib/libnvds_kafka_proto.so

msg-broker-conn-str=******

topic=nxtest

#Optional:

#msg-broker-config=../../deepstream-test4/cfg_kafka.txt

[sink2]

enable=1

#Type - 1=FakeSink 2=EglSink 3=File 4=RTSPStreaming

type=4

#1=h264 2=h265

codec=1

#encoder type 0=Hardware 1=Software

enc-type=0

source-id=0

sync=0

bitrate=4000000

#H264 Profile - 0=Baseline 2=Main 4=High

#H265 Profile - 0=Main 1=Main10

profile=0

# set below properties in case of RTSPStreaming

rtsp-port=9091

udp-port=5400

[sink3]

enable=1

#Type - 1=FakeSink 2=EglSink 3=File 4=RTSPStreaming

type=4

source-id=1

#1=h264 2=h265

codec=1

#encoder type 0=Hardware 1=Software

enc-type=0

sync=0

bitrate=4000000

#H264 Profile - 0=Baseline 2=Main 4=High

#H265 Profile - 0=Main 1=Main10

profile=0

# set below properties in case of RTSPStreaming

rtsp-port=9090

udp-port=5401

[sink4]

enable=1

#Type - 1=FakeSink 2=EglSink 3=File 4=RTSPStreaming

type=4

source-id=2

#1=h264 2=h265

codec=1

#encoder type 0=Hardware 1=Software

enc-type=0

sync=0

bitrate=4000000

#H264 Profile - 0=Baseline 2=Main 4=High

#H265 Profile - 0=Main 1=Main10

profile=0

# set below properties in case of RTSPStreaming

rtsp-port=9092

udp-port=5402

[sink5]

enable=1

#Type - 1=FakeSink 2=EglSink 3=File 4=RTSPStreaming

type=4

source-id=3

#1=h264 2=h265

codec=1

#encoder type 0=Hardware 1=Software

enc-type=0

sync=0

bitrate=4000000

#H264 Profile - 0=Baseline 2=Main 4=High

#H265 Profile - 0=Main 1=Main10

profile=0

# set below properties in case of RTSPStreaming

rtsp-port=9093

udp-port=5403

[osd]

enable=1

gpu-id=0

border-width=1

text-size=15

text-color=1;1;1;1;

text-bg-color=0.3;0.3;0.3;1

font=Arial

show-clock=0

clock-x-offset=800

clock-y-offset=820

clock-text-size=12

clock-color=1;0;0;0

nvbuf-memory-type=0

[streammux]

gpu-id=0

##Boolean property to inform muxer that sources are live

live-source=1

batch-size=4

##time out in usec, to wait after the first buffer is available

##to push the batch even if the complete batch is not formed

batched-push-timeout=60000

## Set muxer output width and height

width=1920

height=1080

##Enable to maintain aspect ratio wrt source, and allow black borders, works

##along with width, height properties

enable-padding=0

nvbuf-memory-type=0

## If set to TRUE, system timestamp will be attached as ntp timestamp

## If set to FALSE, ntp timestamp from rtspsrc, if available, will be attached

# attach-sys-ts-as-ntp=1

[primary-gie]

enable=1

gpu-id=0

batch-size=4

## 0=FP32, 1=INT8, 2=FP16 mode

bbox-border-color0=1;0;0;1

bbox-border-color1=0;1;1;1

bbox-border-color2=0;1;1;1

bbox-border-color3=0;1;0;1

nvbuf-memory-type=0

interval=0

gie-unique-id=1

model-engine-file=../../../../../samples/models/Primary_Detector/resnet10.caffemodel_b8_gpu0_int8.engine

labelfile-path=../../../../../samples/models/Primary_Detector/labels.txt

config-file=./../../../../samples/configs/deepstream-app/config_infer_primary.txt

[tracker]

enable=1

# For NvDCF and DeepSORT tracker, tracker-width and tracker-height must be a multiple of 32, respectively

tracker-width=640

tracker-height=384

ll-lib-file=/opt/nvidia/deepstream/deepstream/lib/libnvds_nvmultiobjecttracker.so

ll-config-file=../../../../../samples/configs/deepstream-app/config_tracker_DeepSORT.yml

gpu-id=0

enable-batch-process=4

enable-past-frame=1

display-tracking-id=1

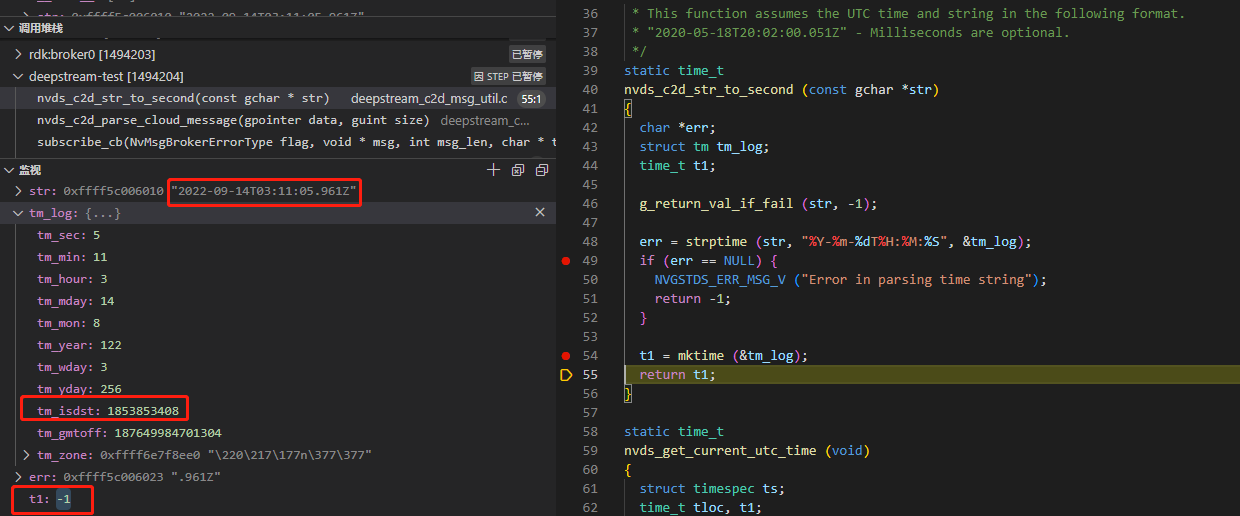

We then debugged the program with gdb and traced the problem to `nvds_c2d_str_to_second`:

`strptime` cannot convert `2022-09-14T03:11:05.961Z` into `tm_log`, so `t1` is -1. However, when we only add a call to `nvds_get_current_utc_time` before the call to `nvds_c2d_str_to_second` and rebuild:

timeStr = json_object_get_string_member (object, "start");

+++ curUtc = nvds_get_current_utc_time ();

startUtc = nvds_c2d_str_to_second (timeStr);

Then everything works. The output looks like this:

This is very strange. It looks as if calling `nvds_get_current_utc_time` triggers some initialization that makes the conversion succeed. Or is there something wrong with the data format I sent?
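To rule out the payload, the timestamp format can be checked on its own, outside DeepStream. The following is only a minimal sketch, assuming the "start" and "end" fields are meant to be UTC timestamps with millisecond precision and a trailing Z. The helper name `to_epoch_seconds` and the constant `TIMESTAMP_FORMAT` are made up for illustration and are not part of DeepStream or kafka-python.

```python
import calendar
from datetime import datetime

# Assumed layout of the "start"/"end" fields: UTC, millisecond precision, trailing Z.
TIMESTAMP_FORMAT = "%Y-%m-%dT%H:%M:%S.%fZ"


def to_epoch_seconds(timestamp: str) -> int:
    """Parse a UTC timestamp string and return whole seconds since the epoch.

    Hypothetical helper, for illustration only.
    """
    parsed = datetime.strptime(timestamp, TIMESTAMP_FORMAT)
    # calendar.timegm interprets the time tuple as UTC, whereas time.mktime
    # would interpret it as local time.
    return calendar.timegm(parsed.timetuple())


print(to_epoch_seconds("2022-09-14T03:11:05.961Z"))
```

If the string parses cleanly here, that would suggest the message sent by the producer is well-formed and that the difference lies on the receiving side, though this sketch does not exercise the DeepStream code path itself.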

Supplementary observations:

In the error case, the `tm_log` structure is populated as:

In the normal case, it is populated as: