I’m trying to decode 4K, 25 fps, H.264 streams coming from different IP cameras (via RTSP) using the NVIDIA Video Codec SDK.

My software and hardware setup is the following:

- Ubuntu 18.04 LTS

- FFmpeg version 3.4.8-0ubuntu0.2

- GStreamer version 1.16.1

- Cuda compilation tools, release 10.0, V10.0.130

- NVIDIA Video Codec SDK 8.2.16

- NVIDIA Driver Version: 460.32.03

- NVIDIA GeForce GTX 1060 6GB

- BOSCH MIC IP 7100i camera

- AXIS Q6128-E camera

I decided to try the samples that come with the official NVIDIA Video Codec SDK 8.2.16.

The first problem:

In particular, I ran the AppDec example using this video as input.

The video was recorded from the Bosch camera using GStreamer (I also tried FFmpeg, and the results are the same).

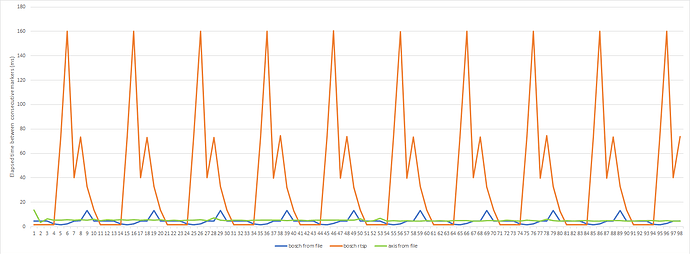

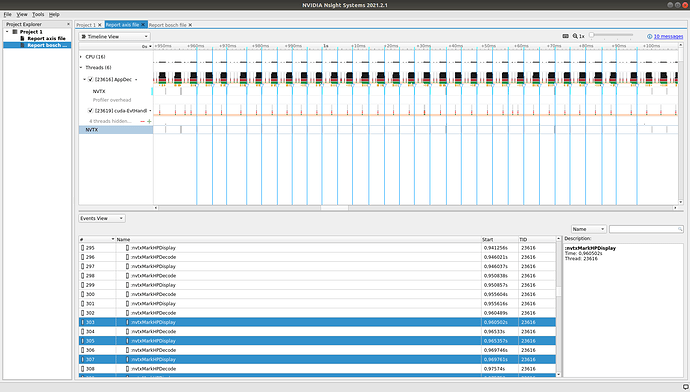

After a minimal modification to AppDec to print the time interval between the decoding of each pair of consecutive frames, I noticed an unexpected periodic pattern that repeats every 10 frames (note that 10 is the distance between two keyframes).

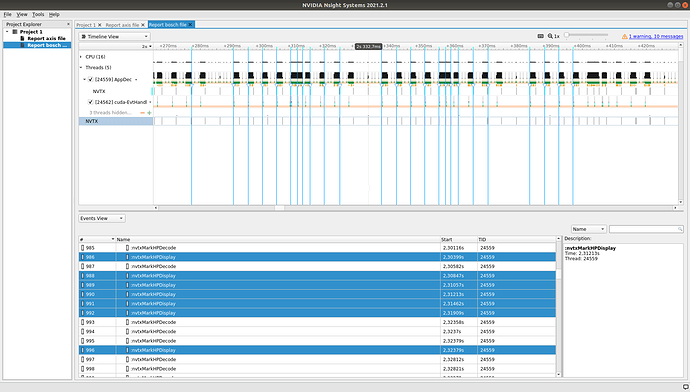
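For reference, the kind of measurement described above can be sketched roughly as follows. This is a minimal, hypothetical helper and not the actual AppDec modification: the names `FrameIntervalRecorder`, `OnFrame` and `MeanIntervalByPhase` are assumptions made for illustration and are not part of the Video Codec SDK. It records the interval between consecutive frames and averages the intervals by position within a group of `period` frames, which should make a pattern tied to the keyframe distance easier to see.

```cpp
#include <chrono>
#include <cstddef>
#include <vector>

// Hypothetical helper (not part of the Video Codec SDK): records the time
// elapsed between consecutive calls to OnFrame(), in milliseconds.
class FrameIntervalRecorder {
public:
    using Clock = std::chrono::steady_clock;

    // Call once per decoded frame. The time point can be injected for testing;
    // by default the current monotonic time is used.
    void OnFrame(Clock::time_point now = Clock::now()) {
        if (hasPrev_) {
            std::chrono::duration<double, std::milli> delta = now - prev_;
            intervalsMs_.push_back(delta.count());
        }
        prev_ = now;
        hasPrev_ = true;
    }

    const std::vector<double>& IntervalsMs() const { return intervalsMs_; }

private:
    Clock::time_point prev_{};
    bool hasPrev_ = false;
    std::vector<double> intervalsMs_;
};

// Averages the intervals by their position modulo `period`, e.g. period = 10
// for a keyframe distance of 10. Returns an empty vector if period is 0.
std::vector<double> MeanIntervalByPhase(const std::vector<double>& intervalsMs,
                                        std::size_t period) {
    if (period == 0) return {};
    std::vector<double> mean(period, 0.0);
    std::vector<std::size_t> count(period, 0);
    for (std::size_t i = 0; i < intervalsMs.size(); ++i) {
        mean[i % period] += intervalsMs[i];
        ++count[i % period];
    }
    for (std::size_t p = 0; p < period; ++p) {
        if (count[p] > 0) mean[p] /= static_cast<double>(count[p]);
    }
    return mean;
}
```

The idea would be to call `OnFrame()` once for every frame the decoder returns and then inspect `MeanIntervalByPhase(rec.IntervalsMs(), 10)`. For a live 25 fps stream the nominal average interval is 1000 / 25 = 40 ms, so positions that deviate strongly from that value stand out.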

However, if I instead use this other video as input, which was recorded from the Axis camera, the time intervals between consecutive decoded frames are always the same, as expected.

The second problem:

Another problem arises when I use the RTSP stream as input. In this case the behavior is wrong for both the Bosch and the Axis cameras.

For the Bosch camera, I get the same unexpected pattern, but with different time intervals because the camera streams at 25 fps.

For the Axis camera, the callback NvDecoder::HandlePictureDisplay(CUVIDPARSERDISPINFO *pDispInfo) is never called. As a result, I always get 0 decoded frames.

NB:

- The time intervals (in milliseconds) between consecutive decoded frames are shown in the attachment.

- I also tried the latest version of the SDK (NVIDIA Video Codec SDK 11) with CUDA 11.2, but the results do not change and I have the same problems.

- I’m currently developing on a GTX device, but I plan to deploy my application on a Lenovo server: a ThinkSystem SR650 with 2x NVIDIA Quadro RTX 4000 8GB PCIe Active GPU. It is therefore very important to solve this problem first.

How can I solve these problems? Could this be a bug in the Video Codec SDK?

To reproduce these issues, you can find the original code of the AppDec example, whose only modification is printing the time interval between two consecutive decoded frames, here.

Thanks,

Simone