I am benchmarking the FrankaCubeStack and FrankaCabinet environments from the IsaacGymEnvs code.

I’m using the latest code on the main branch as of today (specifically this commit: Update release notes for 1.3.1 release · NVIDIA-Omniverse/IsaacGymEnvs@ca7a4fb · GitHub). I downloaded isaacgym, and its setup.py file says it’s version 1.0.preview4.

A few questions:

(1) Has FrankaCabinet been benchmarked and reported in a paper? As far as I can tell, the arXiv paper covers FrankaCubeStack but not FrankaCabinet.

(2) Comparing the two environments, when I run these commands:

python train.py task=FrankaCabinet headless=True

python train.py task=FrankaCubeStack headless=True

The first command takes 20 minutes to run. The second command takes 264 minutes, roughly 13 times longer. Both runs were on the same machine with an NVIDIA GeForce RTX 3090 GPU. Is this running time expected? The config .yaml files show 4096 envs for the former and 8192 for the latter.
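To put those numbers side by side, here is a quick sketch of the arithmetic. It uses only the timings and env counts quoted above. The linear scaling of cost with `num_envs` is purely my assumption, and it ignores any difference in the number of training epochs between the two task configs, which I have not checked.

```python
# Wall-clock minutes and num_envs exactly as reported in this post.
RUNS = {
    "FrankaCabinet": {"minutes": 20.0, "num_envs": 4096},
    "FrankaCubeStack": {"minutes": 264.0, "num_envs": 8192},
}


def time_ratio(slow: str, fast: str) -> float:
    """Raw wall-clock ratio between two runs."""
    return RUNS[slow]["minutes"] / RUNS[fast]["minutes"]


def env_ratio(slow: str, fast: str) -> float:
    """Ratio of parallel environment counts between two runs."""
    return RUNS[slow]["num_envs"] / RUNS[fast]["num_envs"]


raw = time_ratio("FrankaCubeStack", "FrankaCabinet")
envs = env_ratio("FrankaCubeStack", "FrankaCabinet")

# ASSUMPTION (mine, not from the docs): cost grows linearly with num_envs.
# Whatever remains after dividing out the env ratio is not explained by
# the larger environment count alone.
unexplained = raw / envs

print(f"raw slowdown:           {raw:.1f}x")
print(f"env count ratio:        {envs:.1f}x")
print(f"slowdown not explained: {unexplained:.1f}x")
```

So even if the doubled env count accounted for a full 2x, a gap of several times would remain, which is what I am asking about.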

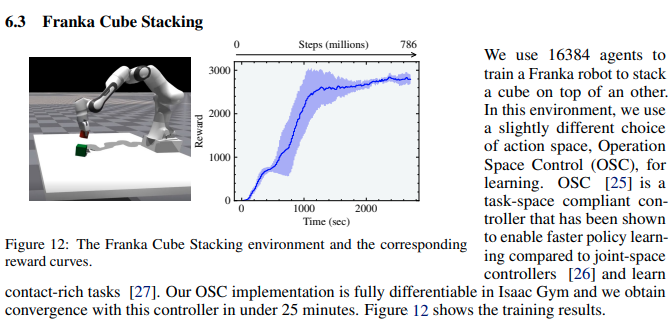

(3) Further, the reward curve for FrankaCubeStack seems to peak at around 800. I followed up on this GitHub issue report: FrankaCubeStack not converging · Issue #73 · NVIDIA-Omniverse/IsaacGymEnvs · GitHub by showing my reward curves for 2 different seeds. The reward curve seems different from what the paper reports. (Edit: I originally also said the timing was different, but now I realize the paper said “convergence […] in under 25 minutes”, and if I were to double my parallel envs, I might actually get similar timing… so maybe ignore the timing comment.)
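For the doubled-envs rerun mentioned in the edit, this is a sketch of how I would build the command lines. It only assembles and prints them; it does not launch training. I am assuming `num_envs=` and `seed=` are accepted as command-line overrides by `train.py` (as I recall from the IsaacGymEnvs README), and the seed values are placeholders, not the seeds I actually used.

```python
import shlex

DEFAULT_NUM_ENVS = 8192  # FrankaCubeStack value from the config .yaml, as noted above
SEEDS = (0, 1)           # hypothetical seeds, for illustration only


def train_command(task: str, num_envs: int, seed: int, headless: bool = True) -> list[str]:
    """Build a train.py invocation as an argument list.

    ASSUMPTION: num_envs= and seed= are valid overrides for train.py.
    """
    return [
        "python",
        "train.py",
        f"task={task}",
        f"headless={headless}",
        f"num_envs={num_envs}",
        f"seed={seed}",
    ]


# Double the default env count, one command per seed.
commands = [
    train_command("FrankaCubeStack", 2 * DEFAULT_NUM_ENVS, seed) for seed in SEEDS
]

for cmd in commands:
    print(shlex.join(cmd))
```

Each printed line can be pasted into a shell from the directory containing train.py.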

(4) However, the policies do seem to produce some “good” stacking behavior, as I show in my video of the rollouts:

This is assuming the Franka does not need to let go of the cube.

My conclusion is that the code is working fine. Maybe the reward function in the environment has changed, or FrankaCabinet was introduced as an extra environment that we could just play around with.

Any comments on the above points would be greatly appreciated.

Thanks for the fantastic library!