Hi guys,

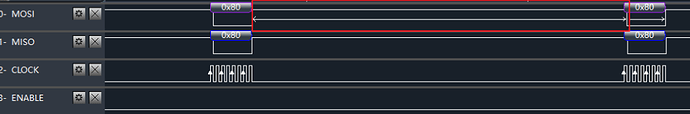

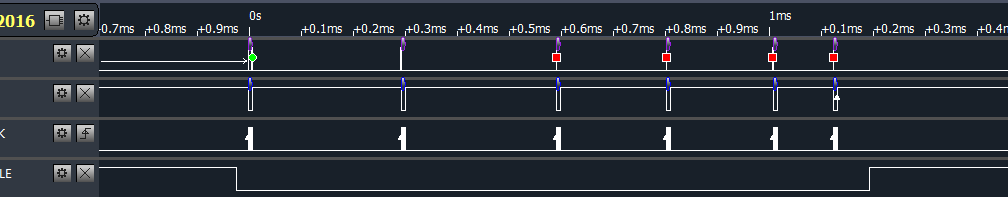

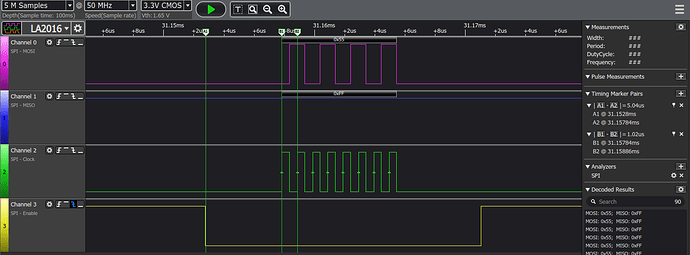

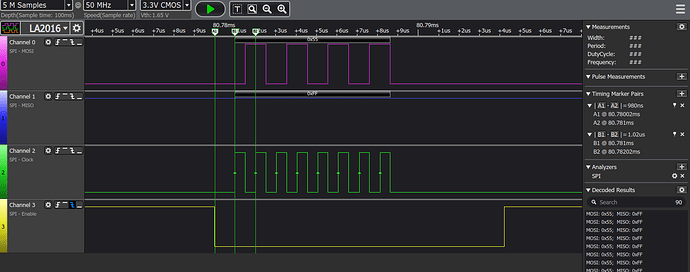

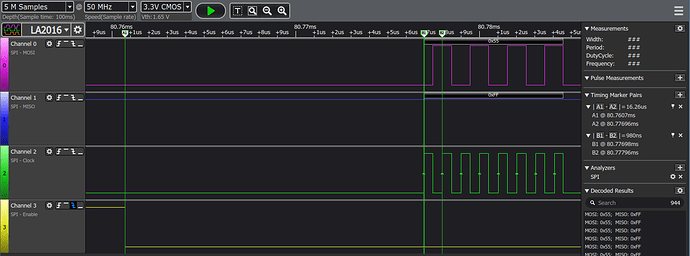

We tested an MCU acting as an SPI slave with an STM32 board as the SPI master. With a chip-select-to-clock delay (CS delay for short) of about 10 ms on the master before each packet, the slave receives the data and acks it as required. But when we switch the SPI master to the Nano dev kit, the MCU's SPI receives nothing except the second packet.

For example,

    void transfer(int fd, unchar const *tx, unchar const *rx, size_t len)
    {
        int ret = 0;
        static unchar bits = 8;
        static uint speed = 1000000;
        static ushort delay = 1000;

        struct spi_ioc_transfer tr = {
            .tx_buf = (unsigned long)tx,
            .rx_buf = (unsigned long)rx,
            .len = len,
            /* applied after the last bit of this transfer, not before the first clock */
            .delay_usecs = delay,
            .speed_hz = speed,
            .bits_per_word = bits,
        };

        ret = ioctl(fd, SPI_IOC_MESSAGE(1), &tr);
        if (ret < 1) {
            sc_debug("\n");
            pabort("can't send spi message");
        }
    }

.....

    unchar def_tx[6] = {0x80, 0x80, 0x80, 0x80, 0x80, 0x80};
    unchar def_rx[6] = {0xff, 0xff, 0xff, 0xff, 0xff, 0xff};

    /* first packet */
    transfer(fd, def_tx, def_rx, 6);
    //sc_delayms(2);
    sc_debug("\n");

    /* second packet (memcpy needs <string.h>) */
    memcpy(def_tx, (unchar[]){0x81, 0x81, 0x80, 0x80, 0x80, 0x80}, sizeof(def_tx));
    transfer(fd, def_tx, def_rx, 6);
    sc_delayms(2);

    /* third packet */
    memcpy(def_tx, (unchar[]){0x82, 0x82, 0x82, 0x82, 0x80, 0x80}, sizeof(def_tx));
    transfer(fd, def_tx, def_rx, 6);

The slave receives only the data from the second transfer (0x81, 0x81, 0x80, 0x80, 0x80, 0x80). We want to use the same CS delay as on the STM32 master to narrow down the problem. How can we set a CS delay before the start of every transfer?
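One userspace idea we could try, as a rough and unverified sketch: put a zero-length, delay-only transfer in front of the payload inside a single spidev message, so that CS is (hopefully) asserted for a while before the first clock edge. The helper names `build_cs_delay_message` and `transfer_with_cs_delay` are made up for illustration. Whether the Tegra SPI controller driver actually asserts CS at the start of the message and honours `delay_usecs` on a zero-length transfer is an assumption that would have to be checked on a scope.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>
#include <sys/ioctl.h>
#include <linux/spi/spidev.h>

/*
 * Fill a two-element spidev message:
 *   tr[0]: zero-length transfer that carries only a delay
 *   tr[1]: the real payload
 * ASSUMPTION: the controller driver asserts CS when the message starts and
 * applies delay_usecs of a zero-length transfer. Not confirmed for Tegra.
 */
void build_cs_delay_message(struct spi_ioc_transfer tr[2],
                            const uint8_t *tx, uint8_t *rx, size_t len,
                            uint16_t pre_delay_us, uint32_t speed_hz)
{
    memset(tr, 0, 2 * sizeof(tr[0]));

    /* Delay-only element: no buffers, no data, CS left unchanged afterwards. */
    tr[0].len = 0;
    tr[0].delay_usecs = pre_delay_us;
    tr[0].cs_change = 0;

    /* Payload element, same settings as the original transfer(). */
    tr[1].tx_buf = (uintptr_t)tx;
    tr[1].rx_buf = (uintptr_t)rx;
    tr[1].len = (uint32_t)len;
    tr[1].speed_hz = speed_hz;
    tr[1].bits_per_word = 8;
}

/* Send one packet as a two-transfer message; returns the ioctl() result. */
int transfer_with_cs_delay(int fd, const uint8_t *tx, uint8_t *rx,
                           size_t len, uint16_t pre_delay_us)
{
    struct spi_ioc_transfer tr[2];

    build_cs_delay_message(tr, tx, rx, len, pre_delay_us, 1000000);
    return ioctl(fd, SPI_IOC_MESSAGE(2), tr);
}
```

Calling `transfer_with_cs_delay(fd, def_tx, def_rx, 6, 10000)` would request roughly the 10 ms used on the STM32 master. Since `delay_usecs` is a 16-bit field, the largest delay that can be requested this way is 65535 us.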

We searched the SPI device tree binding documents and got the results below related to delay.

    garret:~/nano/l4t32.3.1/kernel_src/kernel/kernel-4.9/Documentation/devicetree/bindings/spi$ ack delay
    ...
    spi-fsl-dspi.txt
    22:- fsl,spi-cs-sck-delay: a delay in nanoseconds between activating chip
    24:- fsl,spi-sck-cs-delay: a delay in nanoseconds between stopping the clock
    54: fsl,spi-cs-sck-delay = <100>;
    55: fsl,spi-sck-cs-delay = <50>;
    ...
    nvidia,tegra114-spi.txt
    43:- nvidia,tx-clk-tap-delay: Delays the clock going out to the external device
    45:- nvidia,rx-clk-tap-delay : Delays the clock coming in from the external
    51:- nvidia,clk-delay-between-packets : Clock delay between packets by keeping

But it seems nvidia,tegra114-spi.txt has no CS delay property like the one we need.

How can we solve this problem? Do we need to change the SPI driver code?
