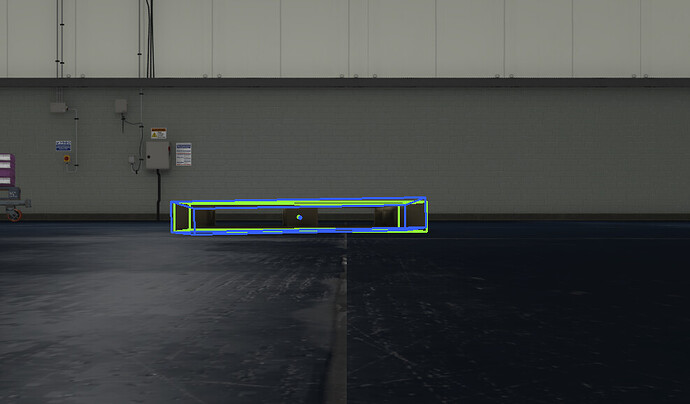

I’m working on object pose detection and am creating a dataset in the Linemod format so that I can train EfficientPose. Similar to the post “Synthetic data recording for BBox3D”, I’ve had to make some changes to get my data. Basically everything is working except for the transform between my camera and my object. I need this to a) visualize the bbox3D, which is where I’m at now, and b) drive the model (it needs to transform the CAD object).

I can see how to get the world poses (I think) for my two objects:

```python
cam_pose, cam_trans, cam_rot = self.getWorld("/World/Camera/BotCamera")
pal_pose, pal_trans, pal_rot = self.getWorld("/World/Euro_Pallet1")
rel_pose = pal_pose - cam_pose  # ????
```

`getWorld` is defined at the end of this post; it comes from code I found somewhere on here.

Forgive me for my weakness here, but how do I get the relative pose from the camera to the object? The subtraction above works for the translation (row 4 of the pose matrix), but fails for the rotation component. I really want a rotation matrix that cleanly rotates around the x, y, and z axes.
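For reference, a minimal sketch of one way the relative pose could be computed: rigid transforms compose by matrix multiplication rather than subtraction, so the object pose is multiplied by the inverse of the camera pose. The sketch uses plain NumPy and assumes both poses are 4x4 matrices in USD's row-vector layout (translation in the last row) with no scale or shear. The names `make_usd_pose`, `relative_pose`, and `split_pose` are hypothetical helpers, not part of any Omniverse API.

```python
import numpy as np


def make_usd_pose(rotation, translation):
    """Hypothetical helper: build a 4x4 pose in USD's row-vector layout.

    `rotation` is a 3x3 column-vector rotation matrix; the row-vector
    layout stores its transpose in the upper-left block and the
    translation in the last row.
    """
    pose = np.eye(4)
    pose[:3, :3] = np.asarray(rotation, dtype=float).T
    pose[3, :3] = translation
    return pose


def relative_pose(cam_world, obj_world):
    """Pose of the object expressed in the camera frame.

    With row vectors, p_world = p_local @ M, so
    p_cam = p_obj @ obj_world @ inv(cam_world).
    Assumes both matrices are rigid (no scale or shear).
    """
    cam_world = np.asarray(cam_world, dtype=float)
    obj_world = np.asarray(obj_world, dtype=float)
    return obj_world @ np.linalg.inv(cam_world)


def split_pose(rel_pose):
    """Return (R, t) in column-vector form, so that p_cam = R @ p_obj + t."""
    rotation = rel_pose[:3, :3].T
    translation = rel_pose[3, :3].copy()
    return rotation, translation


# Made-up example poses: camera translated along z, pallet rotated
# 90 degrees about z and translated.
rot_z90 = np.array([[0.0, -1.0, 0.0],
                    [1.0, 0.0, 0.0],
                    [0.0, 0.0, 1.0]])

cam_pose = make_usd_pose(np.eye(3), [0.0, 0.0, 5.0])
pal_pose = make_usd_pose(rot_z90, [1.0, 2.0, 3.0])

rel_pose = relative_pose(cam_pose, pal_pose)
R, t = split_pose(rel_pose)
```

Staying with the `Gf.Matrix4d` values directly, the equivalent expression should be `pal_pose * cam_pose.GetInverse()`. Note that a USD camera looks down its local -Z axis with +Y up, while Linemod-style ground truth generally follows the OpenCV convention (+Z forward, +Y down), so an additional axis flip and a unit conversion may be needed before writing `R` and `t` to the dataset.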

Thanks!

```python
import numpy as np
import omni.timeline
import omni.usd
from pxr import Gf


def getWorld(self, prim_path):
    # Time code at the current timeline position.
    timeline = omni.timeline.get_timeline_interface()
    timecode = timeline.get_current_time() * timeline.get_time_codes_per_seconds()

    stage = omni.usd.get_context().get_stage()
    curr_prim = stage.GetPrimAtPath(prim_path)

    # 4x4 world transform of the prim; the translation is in the last row.
    pose = omni.usd.utils.get_world_transform_matrix(curr_prim, timecode)
    trans = np.array(pose.ExtractTranslation())

    # Decompose the rotation into angles about the x, y, and z axes.
    abs_rotation = Gf.Rotation.DecomposeRotation3(
        pose, Gf.Vec3d.XAxis(), Gf.Vec3d.YAxis(), Gf.Vec3d.ZAxis(), 1.0
    )
    abs_rotation = np.array([
        Gf.RadiansToDegrees(abs_rotation[0]),
        Gf.RadiansToDegrees(abs_rotation[1]),
        Gf.RadiansToDegrees(abs_rotation[2]),
    ])

    # Return the NumPy translation computed above instead of extracting it again.
    return pose, trans, abs_rotation
```